AI factories become infrastructure systems, not server clusters

NVIDIA links Vera Rubin, Dynamo and DSX into a broader AI factory system built around efficiency, simulation and agentic workloads.

NVIDIA is broadening its infrastructure pitch from chips and racks to the design, operation and economics of entire AI facilities. Its latest set of announcements ties together the Vera Rubin platform, the DSX reference design, Omniverse-based digital twins and Dynamo inference software into a single argument about how AI factories should be built.

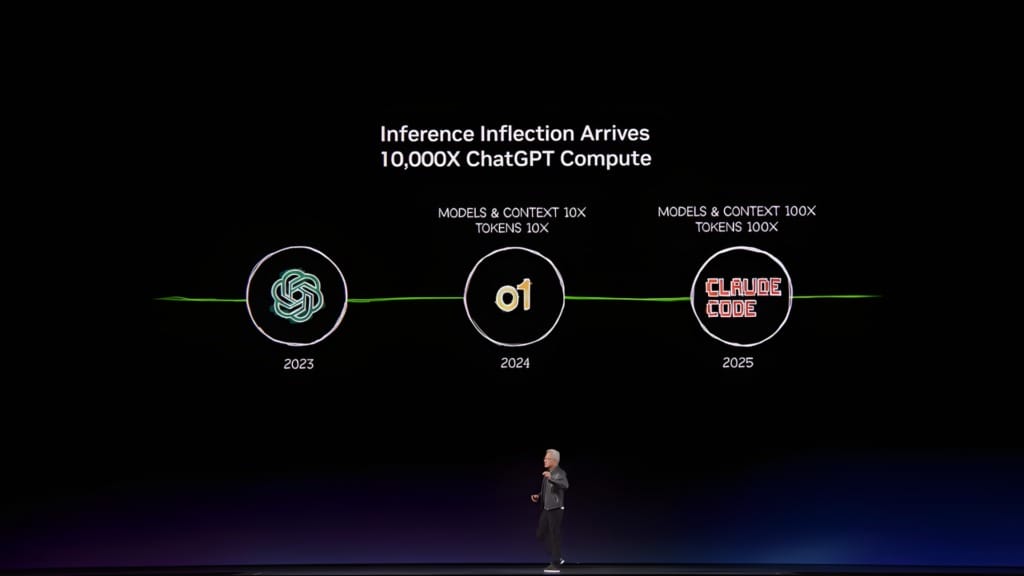

The core idea is straightforward. Large AI deployments are no longer defined only by how many GPUs they contain, but by how efficiently compute, storage, networking, cooling and power are coordinated once those systems are running.

DSX turns infrastructure into a blueprint

The most concrete part of NVIDIA’s argument is the Vera Rubin DSX AI Factory reference design. Rather than treating infrastructure as a collection of separate procurement choices, NVIDIA is offering a guide for how compute, networking, storage, power and cooling should fit together as one system.

That reference design is paired with the Omniverse DSX Blueprint, which allows operators to build digital twins of AI factories before deployment. NVIDIA says this makes it possible to model layouts, thermal behaviour, power distribution and operational policies before hardware arrives, reducing the risk of building large systems through trial and error.

“In the age of AI, intelligence tokens are the new currency, and AI factories are the infrastructure that generates them,” said Jensen Huang, founder and CEO of NVIDIA. “With the NVIDIA Vera Rubin DSX AI Factory reference design and Omniverse DSX Blueprint, we are providing the foundation to build the world’s most productive AI factories, accelerating time to first revenue and maximizing scale and energy efficiency.”

The DSX stack is meant to support that architecture at runtime as well as during planning. Components such as DSX Max-Q, DSX Flex and DSX Exchange are intended to help operators balance compute output, energy use and coordination between IT systems and facility operations.

This is where NVIDIA’s infrastructure story becomes more ambitious. The company is not just selling equipment into data centres; it is defining how those facilities should be simulated, connected and managed once AI workloads become continuous and power-intensive.

Vera Rubin is the rack-scale anchor

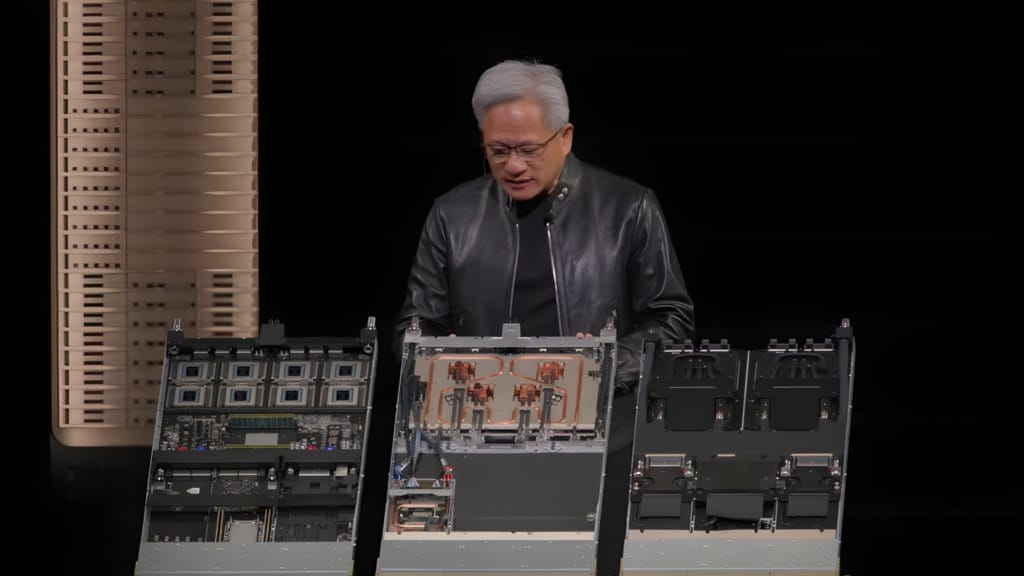

The reference design matters because NVIDIA is pairing it with a rack-scale platform that is meant to operate as a coherent system rather than as discrete hardware. Vera Rubin combines compute, networking and storage components into a pod-scale architecture built for different phases of AI, including pretraining, post-training and agentic inference.

The company’s headline system is the Vera Rubin NVL72 rack, which integrates 72 Rubin GPUs and 36 Vera CPUs. NVIDIA says it can train large mixture-of-experts models with one-fourth as many GPUs as the Blackwell platform requires, while delivering up to 10x higher inference throughput per watt at one-tenth the cost per token.

That matters less as a spec sheet than as an example of where NVIDIA wants infrastructure decisions to happen. The company is moving the conversation away from standalone accelerators and towards racks, pods and factory-scale deployments that are assembled and optimised as a whole.

“Vera Rubin is a generational leap — seven breakthrough chips, five racks, one giant supercomputer — built to power every phase of AI,” Huang said. He added that “the agentic AI inflection point has arrived with Vera Rubin kicking off the greatest infrastructure buildout in history.”

The early customer list also makes clear where NVIDIA expects this system to land first. Cloud providers such as Amazon Web Services, Google Cloud, Microsoft Azure and Oracle Cloud Infrastructure are among the named partners, alongside infrastructure specialists such as CoreWeave and Nebius.

Dynamo puts inference into the control plane

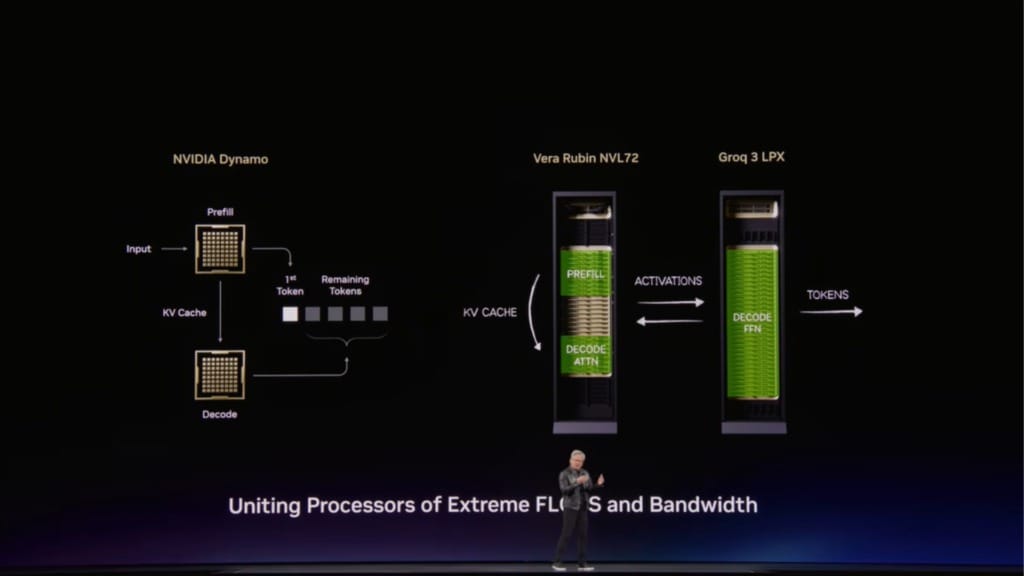

If Vera Rubin is the hardware anchor, Dynamo is the software layer that makes the factory argument more believable. NVIDIA is describing Dynamo 1.0 as an inference operating system that coordinates GPU and memory resources across clusters, handling the uneven and long-running workloads that come with generative and agentic AI.

That is a meaningful change in emphasis. Instead of assuming that better hardware alone will solve inference demand, NVIDIA is treating orchestration as a core part of infrastructure performance. Requests arrive with different lengths, modalities and latency requirements, and the software layer now determines how well those resources are used.

According to NVIDIA, Dynamo can split inference work across GPUs, move data between GPUs and lower-cost storage, and route requests to systems that already hold the most relevant short-term memory from earlier steps. That is intended to reduce wasted work and ease the memory pressure that builds up in longer reasoning and retrieval-heavy workloads.
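The routing idea in particular can be illustrated with a small sketch. The Python below is not Dynamo's API or implementation; it is a toy model under stated assumptions, in which each worker remembers the token prefixes it has already processed and the router weighs that reuse against the worker's current load. The names `Worker`, `longest_cached_prefix`, `route` and the `load_penalty` parameter are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Worker:
    """Hypothetical GPU worker that remembers which token prefixes it has cached."""
    name: str
    cached_prefixes: set = field(default_factory=set)
    active_requests: int = 0


def longest_cached_prefix(worker, tokens):
    """Length of the longest prefix of `tokens` already cached on `worker`."""
    for length in range(len(tokens), 0, -1):
        if tuple(tokens[:length]) in worker.cached_prefixes:
            return length
    return 0


def route(workers, tokens, load_penalty=1.0):
    """Send the request to the worker with the best reuse-versus-load score.

    The scoring rule (reused tokens minus a load penalty) is an assumption
    made for illustration, not a published Dynamo policy.
    """
    def score(worker):
        reuse = longest_cached_prefix(worker, tokens)
        return reuse - load_penalty * worker.active_requests

    best = max(workers, key=score)
    best.active_requests += 1
    # After serving the request, the worker holds every prefix of it.
    for length in range(1, len(tokens) + 1):
        best.cached_prefixes.add(tuple(tokens[:length]))
    return best


if __name__ == "__main__":
    pool = [Worker("gpu-0"), Worker("gpu-1")]
    first = route(pool, ["system", "tools", "question-1"])
    second = route(pool, ["system", "tools", "question-2"], load_penalty=0.5)
    print(first.name, second.name)
```

In this toy version, a follow-up request that shares a long prefix with earlier work tends to return to the same worker unless that worker is already busy enough for the load penalty to outweigh the reuse. That is the trade-off the paragraph above describes.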

“Inference is the engine of intelligence, powering every query, every agent and every application,” said Huang. “With NVIDIA Dynamo, we’ve created the first-ever ‘operating system’ for AI factories. The rapid adoption across our ecosystem shows this next wave of agentic AI is here, and NVIDIA is powering it at global scale.”

NVIDIA says recent benchmarks showed Dynamo improving Blackwell inference performance by up to 7x. More importantly, the company is presenting those gains as something that can come from free, open-source software layered onto existing infrastructure, not only from deploying new hardware.

The partner list around Dynamo is large, but the underlying point is simple. NVIDIA wants inference software to become part of the control plane for AI facilities, not just a developer optimisation layer.

CPUs and storage matter more here

One of the clearer messages in these announcements is that AI factory design no longer revolves around GPUs alone. NVIDIA’s Vera CPU and BlueField-4 STX storage architecture are both aimed at the kinds of workloads that emerge when AI systems reason over longer contexts, interact with tools and run more continuously.

Vera is built for data processing, reinforcement learning and agentic inference, with NVIDIA saying it delivers results twice as efficiently and 50% faster than traditional rack-scale CPUs. A new rack design integrates 256 liquid-cooled Vera CPUs and is meant to support large numbers of concurrent CPU environments alongside GPU systems.

BlueField-4 STX addresses a different bottleneck. NVIDIA says traditional storage architectures do not provide the responsiveness needed for long-context reasoning, and is using STX as a modular reference architecture for keeping context data closer to the compute layer.

The company claims that the first rack-scale implementation, built around a context memory storage platform, can provide up to 5x the tokens per second of traditional storage. It also says the architecture can deliver 4x higher energy efficiency and ingest 2x more pages per second for enterprise AI data.

Mistral AI cofounder and chief technology officer Timothée Lacroix said the system would provide “a critical performance boost” for the company’s agentic AI work and help its models maintain “coherence and speed when reasoning across massive datasets.”

The broader point is that CPU design and storage architecture are being drawn back into the centre of AI infrastructure planning. Long-context reasoning and multi-step inference place demands on memory and data access that older server layouts were not built to handle.

Power and simulation become part of deployment

NVIDIA’s DSX story also reaches into utilities, construction and facility operations. The company says energy has become the biggest bottleneck in AI infrastructure buildouts, citing more than US$300 billion in equipment backlogs and more than 200 gigawatts of projects waiting in US interconnection queues.

That is why the DSX stack includes software for power orchestration and grid interaction as well as compute planning. DSX Flex, for example, is intended to connect AI factories to grid services so they can adjust consumption dynamically and coordinate with onsite generation.
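What adjusting consumption dynamically might mean in practice can be sketched in a few lines. The Python below is not DSX Flex and does not reflect any NVIDIA interface; it is a simplified model that assumes a facility receives a grid import limit, knows its onsite generation, and must decide how much compute load to run. The function name `plan_power` and all of its parameters are hypothetical.

```python
def plan_power(requested_mw, grid_limit_mw, onsite_mw):
    """Return (compute_mw, grid_draw_mw, curtailed_mw) for one control interval.

    Assumptions for illustration: onsite generation is used before grid power,
    and any demand above the combined supply is curtailed.
    """
    if min(requested_mw, grid_limit_mw, onsite_mw) < 0:
        raise ValueError("power values must be non-negative")

    available_mw = grid_limit_mw + onsite_mw
    compute_mw = min(requested_mw, available_mw)
    # Onsite generation covers load first; the grid supplies the remainder.
    grid_draw_mw = max(0.0, compute_mw - onsite_mw)
    curtailed_mw = requested_mw - compute_mw
    return compute_mw, grid_draw_mw, curtailed_mw


if __name__ == "__main__":
    # The grid operator asks the site to stay under 60 MW while workloads want 100 MW.
    print(plan_power(requested_mw=100.0, grid_limit_mw=60.0, onsite_mw=20.0))
```

A real system would also have to decide which jobs to slow or pause. The sketch only shows the budget calculation that such a decision would start from.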

A large partner ecosystem sits around that effort, spanning design software, cooling systems, power infrastructure and digital operations. The individual participants matter less than the approach: NVIDIA is treating AI factories as facility-scale systems that need to be planned across engineering, energy and IT teams from the start.

DSX Air is one of the more practical tools in that push. NVIDIA says the platform lets customers validate networking, storage, orchestration and security in a digital twin before hardware is physically installed, cutting time to first token from weeks or months to days or hours.

CoreWeave is already using DSX Air to test AI factory digital twins in the cloud, while Siam.AI and Hydra Host are using it to validate architectures and orchestration workflows before deployment. That makes simulation less of a design convenience and more of a requirement for bringing large AI facilities online without costly delays.