Ray-Ban Meta AI glasses review: Strong audio and camera undermined by unreliable AI

Ray-Ban Meta AI glasses deliver strong audio and hands-free capture, but AI features remain inconsistent and limited in real-world use.

Smart glasses occupy an awkward middle ground in consumer technology. They promise to reduce phone dependency while remaining tethered to that same device for most functions. The pitch is compelling, but the execution rarely keeps pace.

Table of Contents

- Cat-eye frame runs slightly small, but the build quality holds up

- 12 MP ultra-wide camera captures spontaneous moments but lacks stabilisation

- Open-ear speakers and a five-mic array deliver the most reliable experience

- Meta AI struggles with context and loops on permission requests

- Battery reaches eight hours under moderate use, case adds 48 more

- The verdict: Ray-Ban Meta AI glasses (Gen 2)

Meta’s second-generation collaboration with Ray-Ban targets specific pain points from the first generation. Battery life doubles from four hours to eight, addressing the most common complaint about the original. Video recording jumps from 1440×1920 resolution to 3K at 30 frames per second, a meaningful upgrade for content quality. Audio output increases by 50% in volume with double the bass response, making the open-ear speakers more practical in noisy environments. The camera remains 12 MP but gains an ultra-wide lens for a broader field of view. The five-microphone array and 32 GB of storage carry over unchanged.

Five days of testing across daily commutes and office environments reveal where these upgrades make a difference and where the product still falls short.

Cat-eye frame runs slightly small, but the build quality holds up

The model under review, Ray-Ban Meta Skyler (Gen 2), adopts a cat-eye shape in injected plastic, measuring 133 mm hinge to hinge with a 52 mm lens width, 42 mm lens height, and 150 mm temple length. At 53 grams, the frame is noticeable when first worn but becomes less intrusive over time. Build quality is solid, with no flex or creaking after five days of regular wear.

The Transitions lenses tested here respond quickly to changing light, darkening within seconds in direct sun and clearing just as quickly indoors. However, the Skyler frame runs small. For anyone accustomed to larger styles such as the Wayfarer, the proportions feel noticeably narrower. Extended wear remained comfortable during testing, but the fit will not suit everyone.

As Ray-Ban offers the same Gen 2 technology across multiple frame styles, including the Wayfarer, Headliner, and Skyler, sizing issues can be addressed by choosing a suitable model based on face shape or personal preference. All available styles are offered with sun, clear, Transitions, or polarised lenses.

The glasses come with an LED indicator near the hinge that lights up white when the camera is active, visible enough to meet privacy requirements but discreet in design. A single capacitive button on the right temple arm captures photos with a single press and starts video recording with a long press. The tactile feedback makes it easy to trigger without adjusting the glasses.

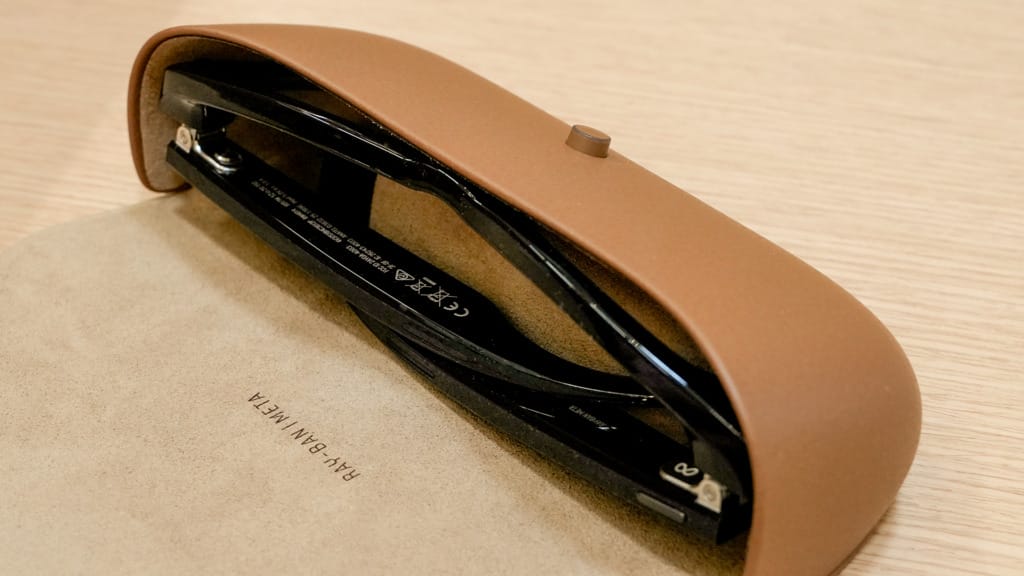

The charging case weighs 133 grams and uses a magnetic closure. Build quality matches the glasses, with a USB-C port on the bottom for charging. Oddly, a USB-C cable is not included, which introduces a small but unnecessary friction point given the otherwise considered hardware package.

12 MP ultra-wide camera captures spontaneous moments but lacks stabilisation

Photos and videos are captured in a portrait orientation by default, which aligns more naturally with social platforms such as Instagram and TikTok than with traditional landscape viewing.

The 12 MP ultra-wide sensor captures photos at 3,024 × 4,032 pixels, with detail that holds up well in good lighting and colour accuracy that is reasonable for social sharing. The ultra-wide field of view suits first-person perspectives and captures more of a scene than a standard smartphone lens. However, this is not computational photography. There is no HDR processing, no night mode, and no subject detection. The dynamic range is narrower than that of a modern smartphone. Shoot into bright light, and highlights blow out. Move indoors, and noise becomes visible quickly.

Video recording offers multiple resolutions: 720p at 120 fps, 1080p at 30 or 60 fps, and 3K Ultra HD at 30 fps. The 3K mode produces sharp footage outdoors in bright light, with strong detail and accurate colour. Indoor recording remains usable, though grain increases in dimmer areas.

The limitation is stabilisation, or the complete absence of it. Recording while walking introduces visible movement into the footage. Turning your head shows natural motion that action cameras or phones would digitally smooth out. The shake is not extreme, but it is noticeable enough that anyone expecting action-camera-level stability will be disappointed. Static shots and slow, deliberate panning hold up well. For casual, spontaneous clips shared to social media, the walking movement may be acceptable. For polished video content or longer recordings where smooth footage is essential, post-processing stabilisation is necessary.

Storage is 32 GB of onboard flash memory, enough to hold over 1,000 photos or around 100 short videos. That said, media must be offloaded regularly through the Meta AI app.

For quick social posts or documenting experiences as they happen, the camera works well. For planned video content such as vlogs, tutorials, or anything requiring polished output, the lack of stabilisation and computational photography becomes a more significant constraint.

Open-ear speakers and a five-mic array deliver the most reliable experience

Audio performance is where the glasses prove their value most consistently. The open-ear speakers output sound at 76.1 dB(C), 50% louder than the original Ray-Ban Stories, with double the bass response. This results in a fuller, more balanced sound than expected for a frame-integrated system. Bass is present without overwhelming the mids or highs, and vocals remain clear across typical listening volumes.

The directional design keeps sound relatively contained, too. In quiet indoor environments, people nearby may hear faint audio at maximum volume. In outdoor commutes or moderate ambient noise, leakage is minimal. Adaptive volume adjusts output automatically based on surrounding noise, ramping up outdoors and dropping indoors. The transitions are smooth and rarely require manual adjustment. The open-ear design also maintains situational awareness, making the glasses safer for outdoor use and more appropriate in shared environments.

Call quality benefits from the custom five-microphone array. Two microphones sit in each temple arm, with a fifth near the nose pad. This distributed setup captures voice clearly while suppressing background noise. Meta claims that 90% of ambient noise is blocked during calls, and testing supports this. Calls from busy streets remained intelligible. The microphone array handles wind noise better than expected, a noticeable improvement over phone speaker mode or low-end Bluetooth headsets.

Audio and call performance are the most refined aspects of the product. They work reliably, require minimal adjustment, and deliver consistent results. If the glasses were purely a hands-free audio device with a built-in camera, the experience would closely align with expectations. The problems arise when Meta AI enters the equation.

Meta AI struggles with context and loops on permission requests

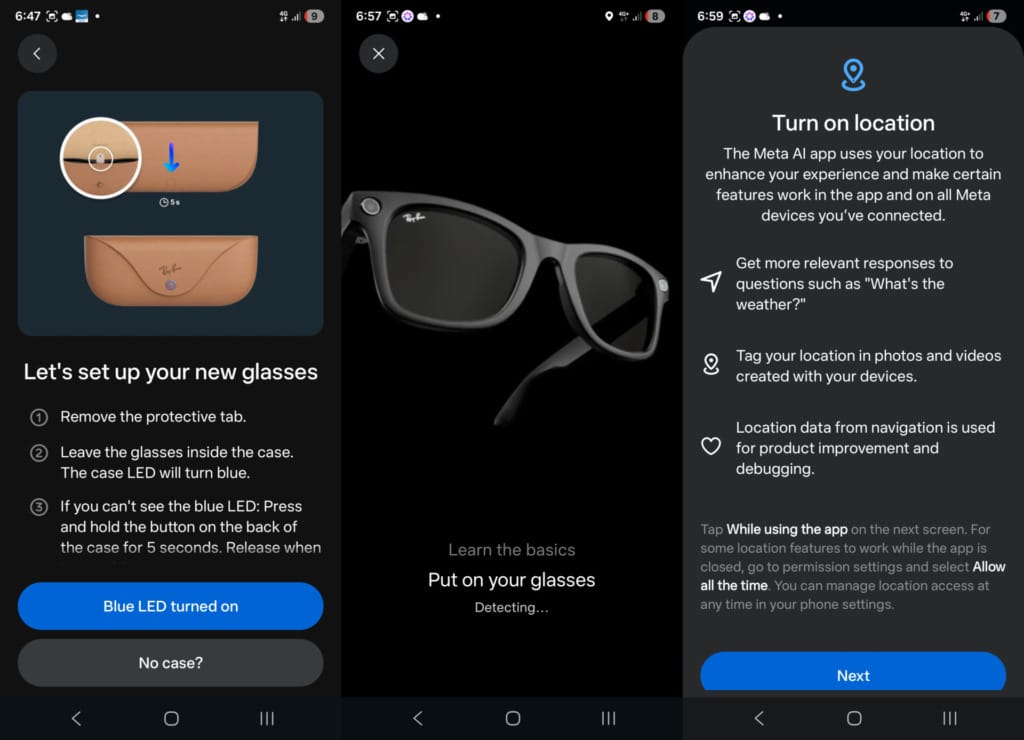

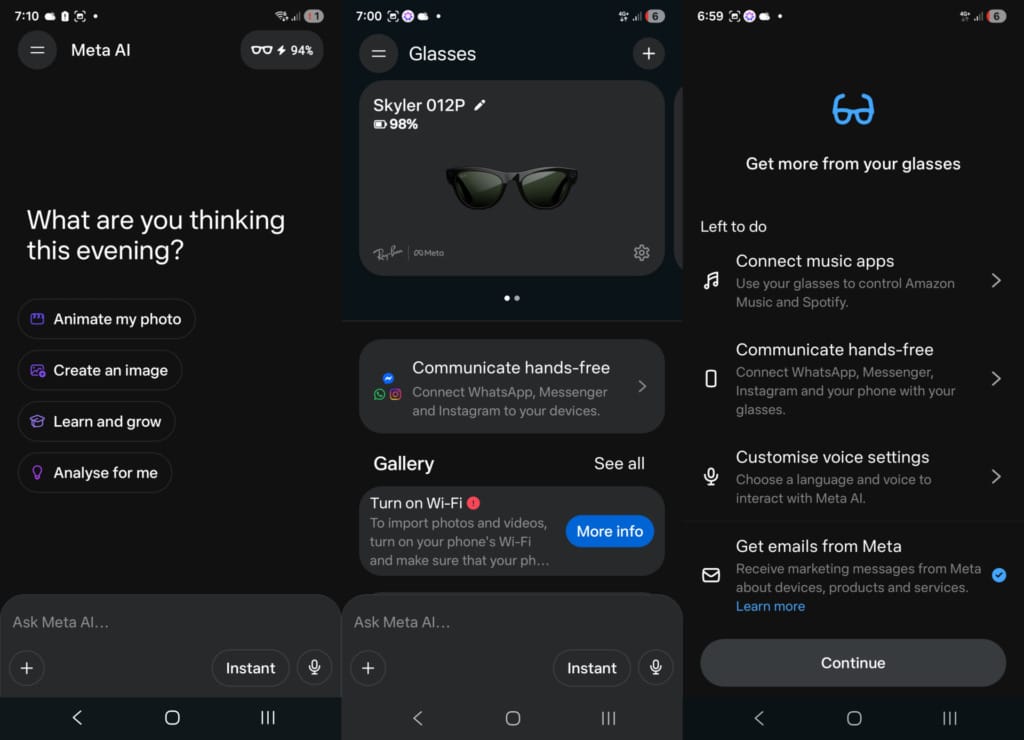

Meta positions AI as a defining feature of the second generation. The assistant is supposed to provide real-time information about landmarks, suggest recipes, remind you where you parked, and translate conversations across six languages. The implementation does not deliver on this pitch.

Activating Meta AI requires saying “Hey Meta” aloud. Responses are delivered through the speakers, which work well in quiet environments but are difficult to hear in noisy surroundings. The assistant responds to factual queries such as weather or basic trivia, but the depth and accuracy of responses lag noticeably behind ChatGPT or Google Gemini. For users familiar with those platforms, the drop in capability is jarring. Questions that prompt detailed answers elsewhere receive shorter, less helpful responses, or responses with no context.

Functional issues compound this. Location-based queries repeatedly prompted for location access during testing, even though permissions had already been granted in the app. The loop persisted across multiple attempts, effectively blocking the feature. Closing and reopening the app did not resolve it. Restarting the glasses did not resolve it. This kind of failure undermines trust entirely.

Translation is supported across English, French, Italian, Spanish, German, and Portuguese. The feature works at a basic level for simple phrases. Point the camera at a menu, ask for a translation, and the assistant reads back a rough equivalent. Accuracy is acceptable for straightforward requests.

However, the language selection also highlights a regional gap. For a product launching in Asia, the absence of Japanese, Korean, Bahasa, Thai or Tagalog limits practical utility in key markets. Meta appears to be prioritising European languages first, which is understandable given this is early in the regional rollout, but it narrows the audience who will find translation genuinely useful day to day.

Meta AI works occasionally for simple tasks, but it is not reliable enough to build workflows around. The gap between the marketing promise and actual capability is wide, and the functional issues make the feature difficult to recommend in its current state.

Battery reaches eight hours under moderate use, case adds 48 more

Meta rates the AI glasses for up to eight hours of battery life on a single charge, with up to 48 additional hours from the fully charged case. Standby time is listed at 19 hours. Testing over five days involved music playback during commutes, a handful of photos and short videos, several calls, and occasional Meta AI interactions. After approximately four hours of this mixed use, the battery sat at 58%. The eight-hour claim appears achievable under moderate use, but continuous video recording or heavy use of AI features drains the battery faster.
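As a rough check on those figures, the single test reading (58% remaining after about four hours) can be projected to a full-charge runtime. The sketch below assumes the battery drains linearly, which real batteries only approximate, and the function names are illustrative rather than anything provided by Meta. It also divides the rated 48 case hours by the rated eight hours per charge to estimate how many full recharges the case holds.

```python
def projected_runtime_hours(elapsed_hours: float, remaining_pct: float) -> float:
    """Project total runtime from one battery reading, assuming linear drain."""
    used_pct = 100.0 - remaining_pct
    if elapsed_hours <= 0 or used_pct <= 0:
        raise ValueError("need positive elapsed time and some battery drain")
    drain_per_hour = used_pct / elapsed_hours  # percentage points per hour
    return 100.0 / drain_per_hour


def case_recharges(case_hours: float, single_charge_hours: float) -> float:
    """Number of full glasses charges implied by the rated case capacity."""
    return case_hours / single_charge_hours


# Figures from the review: ~4 hours of mixed use, 58% remaining.
print(f"Projected runtime: {projected_runtime_hours(4.0, 58.0):.1f} hours")
# Rated figures: 48 additional hours from the case, 8 hours per charge.
print(f"Case recharges: {case_recharges(48.0, 8.0):.0f}")
```

Under that linear assumption, the mixed-use reading projects to roughly nine and a half hours, which is consistent with the eight-hour rating being achievable, and the rated case capacity works out to six full charges.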

The charging case becomes essential for all-day use, holding enough charge to replenish the glasses multiple times. The glasses charge quickly when docked, reaching 50% in around 20 minutes. However, relying on the case means carrying it regularly, which reduces simplicity. The case is compact enough for a jacket pocket or bag, but it is one more thing to manage.

Connectivity relies on Bluetooth 5.3 for pairing with a smartphone. Wi-Fi 6 enables firmware updates and faster media transfers. The glasses are compatible with iOS 15.2 and above, and Android 10 and above. However, nearly all functionality depends on maintaining a Bluetooth connection. The Meta AI app handles media management, settings adjustments, and assistant interactions, reinforcing that the glasses are an extension of the phone rather than an independent device.

The verdict: Ray-Ban Meta AI glasses (Gen 2)

The Ray-Ban Meta AI glasses refine the hardware in ways that matter. Battery life doubles, video resolution improves to 3K, and audio output increases in volume and tonal balance. These upgrades address real limitations from the first generation and make the glasses more practical for daily wear. Audio performance is strong enough to replace earbuds for casual listening. Call quality is clear enough to handle work calls without fumbling for a phone. The camera captures moments quickly from a perspective that smartphones cannot replicate easily.

However, the software layer has not kept pace. Meta AI remains too inconsistent to justify its prominence in the product positioning. Responses lack the depth and contextual awareness of established assistants. Translation works, but lacks regional language support for Asian markets. The AI feels like a prototype rather than a finished product, which significantly undermines the value proposition.

The absence of video stabilisation is another limitation that affects daily usability. Footage shot while walking shows noticeable movement that action cameras automatically smooth out.

In markets where the AI glasses are available, they typically fall into the mid-tier eyewear category. Whether that pricing makes sense depends heavily on which features you intend to use most. For hands-free audio and spontaneous capture, the value is there. For AI assistance or polished video recording, it is not.

In short, the hardware works, but the software will need more work.