Physical AI gets an industrial playbook from NVIDIA

NVIDIA ties robotics, synthetic data, autonomous driving and edge AI into a broader physical AI stack for real-world deployment.

NVIDIA’s latest physical AI announcements are less about a single robotics launch and more about assembling the capabilities needed to move autonomous systems into real environments. Across robotics, industrial software, synthetic data, autonomous driving and edge networks, the company is outlining how simulation, training, validation and operations can work together.

Physical AI poses a different operational challenge from chatbots or coding assistants. Robots, vehicles and vision systems have to function in live environments, where mistakes carry physical consequences. That raises the requirements for simulation fidelity, safety, inference speed and data quality.

At GTC, NVIDIA used that broader frame to connect Cosmos world models, Isaac simulation tools, GR00T robot models, the Physical AI Data Factory Blueprint, DRIVE Hyperion and AI-RAN infrastructure into a more unified operating model. Across those announcements, the emphasis was on execution: how physical AI moves from research and testing into production environments.

Robotics leaves the demo stage

Robotics is where NVIDIA’s approach becomes most concrete. The company is no longer treating robot intelligence as a standalone model problem. It is linking simulation tools and AI models to industrial use, where digital twins, edge inference and production-line validation carry more weight than stage demos.

That is why the industrial partnerships stand out. Companies such as ABB Robotics, FANUC, KUKA and YASKAWA are using Omniverse libraries and Isaac-based simulation to model and validate factory operations before rollout. These partnerships point to deeper integration with existing industrial environments, where robotics systems are being embedded into production lines, warehouses and infrastructure.

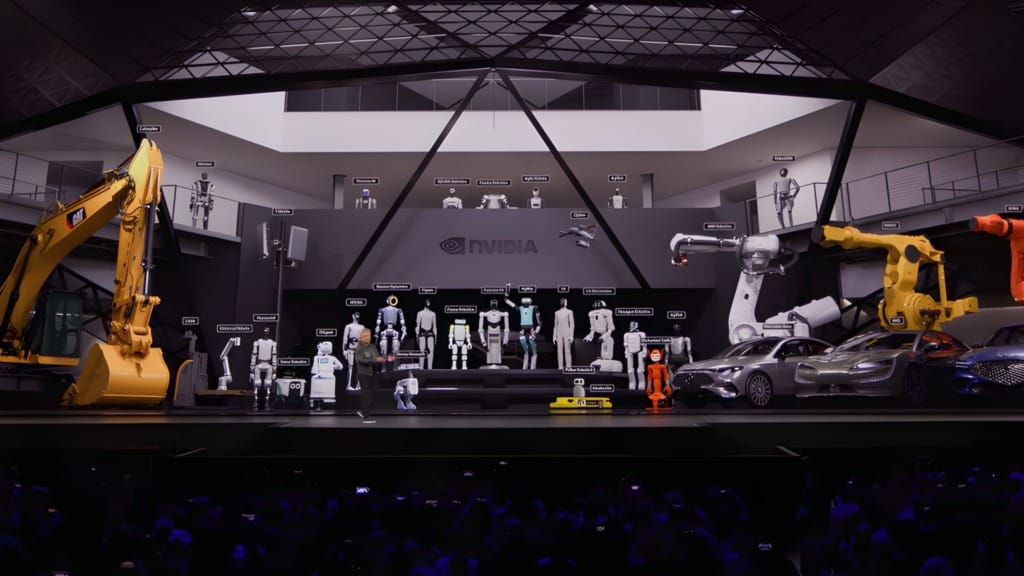

“Physical AI has arrived — every industrial company will become a robotics company,” said Jensen Huang, founder and CEO of NVIDIA. “NVIDIA’s full-stack platform — spanning computing, open models and software frameworks — is the foundation for the robotics industry, uniting a worldwide ecosystem to build the intelligent machines that will power the next generation of factories, logistics, transportation and infrastructure.”

Humanoids remain the most visible part of that push, but they are only one expression of it. NVIDIA highlighted companies including Figure, Boston Dynamics and NEURA Robotics as part of its humanoid ecosystem, while also pointing to industrial uses of Isaac GR00T models. More notable is the emergence of a clearer development path. Isaac Lab 3.0, commercial licensing for GR00T N1.7 and the preview of GR00T N2 point to an effort to standardise how robot developers train, evaluate and run systems across different machine types.

Data becomes the real constraint

If robotics is the visible layer, data is the harder constraint underneath it. NVIDIA’s Physical AI Data Factory Blueprint is effectively an attempt to turn data generation into a repeatable process for robotics, vision AI and autonomous vehicles.

The blueprint combines Cosmos models with automation and coding agents to expand limited real-world datasets into broader training and evaluation workflows. That includes generating edge cases and long-tail scenarios that are difficult or expensive to capture in live settings. In practice, NVIDIA is framing physical AI as a workflow problem as much as a model problem.

“Physical AI is the next frontier of the AI revolution, where success depends on the ability to generate massive amounts of data,” said Rev Lebaredian, vice president of Omniverse and simulation technologies at NVIDIA. “Together with cloud leaders, we’re providing a new kind of agentic engine that transforms compute into the high-quality data required to bring the next generation of autonomous systems and robots to life. In this new era, compute is data.”

That also explains the role of cloud partners such as Microsoft Azure and Nebius. NVIDIA wants large pools of compute to function as data production infrastructure, not simply as model training capacity. Early adopters across robotics, video analytics and autonomous driving point to rising demand for data curation, augmentation and evaluation, alongside model development itself.

Cars and networks join the stack

NVIDIA’s automotive and telecom announcements extend the same logic into larger, distributed environments. In automotive, the company is positioning DRIVE Hyperion, Halos OS and related software as a shared base for training, simulation, validation and operation across advanced driver assistance and robotaxi programmes.

Partnerships with Hyundai Motor Group, Kia and a wider set of mobility players reflect NVIDIA’s push to give automakers and transport platforms a common foundation instead of separate development tracks. That does not guarantee large-scale adoption, but it clarifies where the company believes standardisation can reduce engineering complexity.

The AI-RAN work with T-Mobile and Nokia serves a similar function on the network side. Here, the focus is edge infrastructure that can support vision agents, cameras and robotic systems operating across wide-area environments. Rather than treating 5G, edge inference and physical AI as separate categories, NVIDIA is bringing them into a single operating framework.

The ecosystem is doing the real work

The most convincing part of NVIDIA’s physical AI story is the breadth of the ecosystem around it. Robot makers, industrial software vendors, cloud providers, healthcare firms, logistics operators and telecom companies are all being drawn into the same workflow. That breadth is necessary because physical AI adoption is fragmented by nature.

Examples from manufacturing, warehousing and healthcare illustrate how simulation is being applied to narrower operational problems, from validating production systems to training autonomous forklifts and exploring surgical robotics. These use cases are more useful than the humanoid headlines because they point to where physical AI may deliver measurable value first.

That does not mean physical AI is ready for large-scale use across every sector. What it does suggest is that the next phase is being organised around simulation, synthetic data, validation and operational workflows, rather than robotics demos alone.

NVIDIA’s message at GTC is that physical AI should be treated as a production architecture spanning factories, vehicles, hospitals and edge networks. The more pressing question now is not whether the models are improving, but whether the surrounding workflow is becoming usable enough for real-world adoption.