Lenovo: Why most enterprise AI pilots never make it to production

Enterprise AI is moving beyond experimentation, but production adoption remains uneven due to operational and governance constraints.

Across Asia, the enterprise AI gold rush has hit a familiar bottleneck: the pilot trap. While most large organisations now run multiple pilots simultaneously, testing everything from supply chain optimisation to employee assistance, a stark pattern has emerged. Only half of these pilots ever make it into production, and only half of those are actually adopted by their intended users, which means roughly one in four pilots ends up in regular use.

The transition gap is not primarily technical. According to Linda Yao, Vice President and General Manager for Hybrid Cloud & AI Solutions at Lenovo, the deeper issue is that many organisations underestimate what it takes to operationalise pilots once they move beyond controlled testing and into environments that require production-grade foundations.

Where pilots tend to break down

The Lenovo CIO Playbook, which tracks enterprise AI adoption patterns, highlights the scale of the issue. While organisations run dozens of pilots, the majority fail to transition successfully, with the breakdown usually occurring around coordination, ownership, and readiness for real-world deployment. These are the pressures that tend to surface once a pilot moves beyond technical validation and into live business use.

First, pilots are usually built to prove that something works, not to ensure it runs reliably in production. They operate in controlled environments with cleaner data, fewer dependencies, and narrower workflows. That makes them effective for testing feasibility, but less useful as indicators of real-world performance. Once deployed, the same system has to handle fragmented data, legacy infrastructure, and varied user behaviour. Reliability, integration, and accountability become harder to maintain.

Second, AI has expanded beyond IT departments, making scale an organisational challenge rather than a purely technical one. With nearly half of initiatives funded by business units, deployment is tied more closely to operational priorities and measurable outcomes. This brings AI closer to real use cases, but also increases coordination demands. What begins as a contained proof-of-concept can require alignment across IT, operations, compliance, and business teams, turning scale into a question of workflow integration and ownership.

Third, governance remains underdeveloped where it matters most. Only one-third of organisations have established AI policies, while nearly half are still building them. In pilot stages, weak governance can be overlooked because the system is still operating within narrow boundaries. In production, however, it becomes a direct constraint. Enterprises need clear rules on decision boundaries, accountability, and auditability before AI can be trusted in live environments, particularly in regulated or high-risk settings where failures carry operational and compliance risks.

This gap between experimentation and execution comes down to how early organisations anchor AI initiatives to business outcomes and operational reality. As Linda Yao puts it, “AI implementations must show clear alignment to business value. Defined ROI and integration into existing workflows help move AI pilots beyond being isolated successes. Organisations that succeed tend to embed governance, data discipline, and change management early, ensuring that pilots are designed with production realities in mind rather than treated as standalone experiments.” The point is that production readiness is shaped well before deployment, through decisions about workflow fit, ownership, and operational discipline.

What frameworks can and can’t accelerate

Faced with repeated deployment failures, organisations have turned to structured frameworks that standardise parts of the process, based on the view that recurring problems are best addressed through reusable solutions.

Lenovo’s Hybrid AI Advantage takes this approach, combining governance, infrastructure, and lifecycle management into a unified framework. Its value becomes evident early in execution, particularly when moving from discovery to production-ready design.

Yao points to the work with Yili Group, Asia’s largest dairy company, as an example of how structured frameworks can speed up the early stages of execution. The engagement began with a discovery phase that mapped business priorities to specific decision points across the supply chain, keeping the focus on planning accuracy, inventory management, and logistics coordination rather than on the technology itself.

From there, standardised components established the data and infrastructure foundation, integrating multiple systems into a consistent environment. Pre-validated patterns for orchestration and lifecycle management accelerated development, allowing the team to move quickly from concept to a functioning multi-agent system.

Standardisation has limits, however, and the system still had to be adapted to Yili’s operations. The supply chain super agent was built around proprietary data, existing systems, and decision-making processes, with models tuned to match how supply chain trade-offs are handled in practice.

The framework accelerated development once enterprise-specific requirements were defined around actual workflows and outcome metrics, allowing the team to move faster without giving up company-specific design choices.

Frameworks can speed up the development of repeatable components, but they do not eliminate the need for enterprise-specific work. The risk is in treating them as complete solutions rather than accelerators, especially when much of the real complexity still sits in proprietary data, internal processes, and operational trade-offs.

The governance gate for autonomous agents

As organisations explore agentic AI – systems that act autonomously rather than simply assist – the stakes rise because performance alone is no longer enough. Enterprises need to understand what actions agents can take, under what conditions, and how those actions are monitored in live environments.

The governance gap becomes critical at this stage. With only one-third of organisations having comprehensive AI frameworks and 47% still developing policies, most are not yet positioned to deploy agents at scale or to give autonomous systems meaningful operational authority.

Yao frames the requirement in operational terms. “Before agents can operate in live environments, enterprises need clear policy frameworks defining decision boundaries, accountability, and auditability. This includes what agents are allowed to do, under what conditions, and with what level of autonomy.” This shifts the focus from capability to accountability, where organisations must define ownership of outcomes so that actions are traceable and decisions can be reviewed across the full lifecycle.

Lenovo’s support for NVIDIA OpenShell and NemoClaw shows how this control can be implemented, bringing sandboxed execution, privacy controls, and enterprise-level monitoring into a single operational layer. The broader point is that agentic AI adoption depends as much on governance maturity as on system capability, because autonomy becomes harder to justify when oversight, traceability, and policy enforcement are still immature.

Drawing the automation boundary

Even with governance frameworks in place, organisations still face a more granular question: which AI-driven actions can be automated safely, and which require human approval? Yao takes a pragmatic view. “Actions that are well-defined, repeatable, and operate within established policy frameworks are traditionally the most suitable for automation. These are typically areas where outcomes are predictable and can be measured and audited.” This points to a broader pattern where automation works best in controlled, rules-based environments.

Many enterprise decisions, however, involve context, judgment, and accountability, which makes full automation harder to justify. In these cases, oversight remains necessary, whether through human review or tightly defined agentic guardrails, so that decisions can be interpreted and challenged where needed.

Most organisations adopt a hybrid approach, automating within clearly defined boundaries while retaining human-in-the-loop controls for higher-risk or less predictable scenarios. Over time, the scope can expand as governance matures and confidence builds, but the starting point is usually selective automation rather than full autonomy.
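As a rough illustration of that hybrid approach, the boundary can be pictured as a simple routing rule. The sketch below is not Lenovo's implementation: the `ProposedAction` fields, the `risk_score` scale, the 0.3 threshold, and the example action names are all assumptions made for illustration, loosely mirroring the criteria Yao describes (well-defined, repeatable, within policy, auditable).

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTOMATE = "automate"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class ProposedAction:
    # All fields are hypothetical; a real system would derive them from policy.
    name: str
    within_policy: bool  # covered by an established policy framework
    repeatable: bool     # well-defined and repeatable
    auditable: bool      # outcome can be measured and audited
    risk_score: float    # assumed scale: 0.0 (low risk) to 1.0 (high risk)


def route_action(action: ProposedAction, risk_threshold: float = 0.3) -> Decision:
    """Automate only when every criterion holds and risk is below the threshold."""
    meets_criteria = action.within_policy and action.repeatable and action.auditable
    if meets_criteria and action.risk_score < risk_threshold:
        return Decision.AUTOMATE
    # Anything outside the defined boundary falls back to a human reviewer.
    return Decision.HUMAN_REVIEW


reorder = ProposedAction("reorder_packaging", True, True, True, 0.1)
reroute = ProposedAction("reroute_cold_chain", True, False, True, 0.6)

print(route_action(reorder).value)  # automate
print(route_action(reroute).value)  # human_review
```

Raising `risk_threshold` over time is one way to picture the scope of automation expanding as governance matures, with human review remaining the default for anything that does not clearly qualify.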

These requirements are easier to meet when control, monitoring, and policy enforcement are built into the operating environment from the outset, so that decisions remain observable, auditable, and accountable as automation expands.

Orchestration at scale

Once in production, the challenge shifts from model performance to orchestration across data sources, systems, and workflows. FIFA AI Pro, which Lenovo built for performance analysis, illustrates what this looks like at scale, processing petabytes of historical and live football data, analysing thousands of performance metrics, and coordinating multiple agents to deliver insights to coaches, players, and analysts within seconds during live matches.

“The challenge is not any single component, but how data, models, and agents interact across hybrid infrastructure while still producing consistent, reliable outputs under time pressure,” Yao explains. “With no margin for ambiguity, the system must operate with precision across every layer.”

Three areas become critical at this scale. The first is lifecycle management, where organisations must consistently manage the deployment, updates, monitoring, and eventual retirement of multiple agents, because gaps at any stage can quickly introduce fragmentation, reduce reliability, and make systems harder to maintain over time.

The second is designing for different users and contexts, since analysts, coaches, and players require different types of insights, often under varying time constraints. Delivering outputs that remain relevant without becoming generic depends on how well agents interpret intent, prioritise information, and adapt responses to each role.

The third is trust and control, particularly when agents operate across diverse data sources and environments. Maintaining consistent governance, privacy, and compliance becomes more complex as systems span regions with different regulatory requirements, making it harder to enforce standards and ensure that outputs remain reliable and accountable.

This kind of orchestration depends on an architecture that can support scale without losing performance, consistency, or control across multiple agents and data environments, because once systems begin operating across roles, workflows, and live data streams, those qualities become operational requirements rather than technical preferences.

The underestimated complexity of hybrid deployment

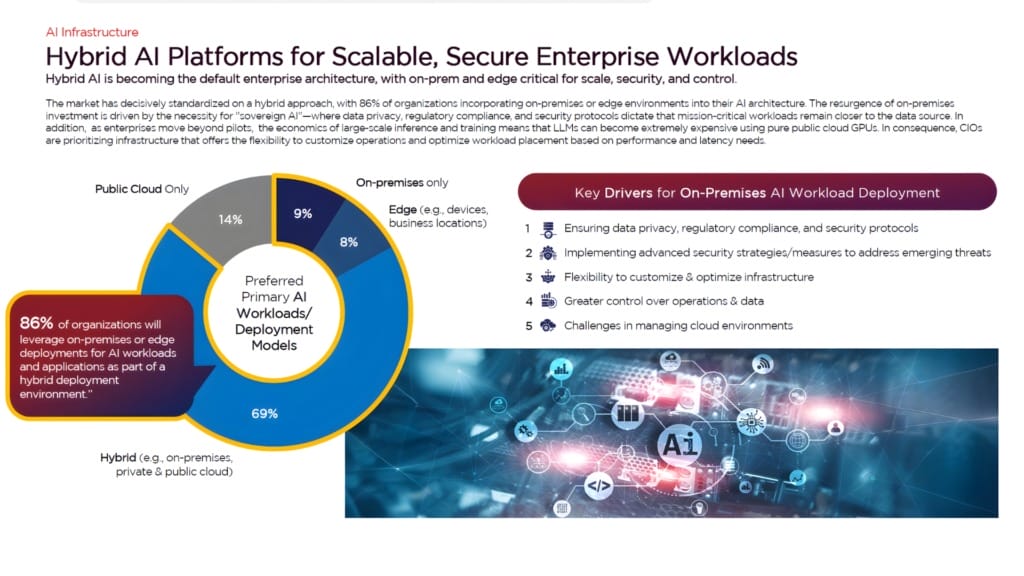

Hybrid AI, combining on-device, edge, and cloud environments, has become the default deployment model. While 86% of organisations plan to incorporate on-premises or edge into their AI strategy, only about 31% have established comprehensive governance frameworks, and this gap becomes visible quickly once systems move into live operations.

Workload placement is often the first pressure point, as different AI tasks have distinct requirements. In manufacturing environments, real-time quality inspection needs to run at the edge for low latency, while model training remains centralised and employee copilots run on AI PCs, creating a mix of workloads that must be coordinated across environments.

As Yao notes, “In situations without clear governance, teams often default to the cloud, driving up cost and latency, or distribute workloads inefficiently, leading to inconsistent performance.” The result is uneven system behaviour, where cost, speed, and reliability are not optimised together, and where infrastructure choices are driven more by convenience or uncertainty than by workload fit.
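One way to picture an explicit placement policy, as opposed to defaulting to the cloud, is a small rule set keyed on workload characteristics. This is a minimal sketch under stated assumptions: the `Workload` fields, the 50 ms edge cutoff, and the four target names are hypothetical and chosen only to mirror the manufacturing example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    # Hypothetical attributes used only for this illustration.
    name: str
    max_latency_ms: int  # latency budget the task can tolerate
    is_training: bool    # model training rather than inference
    per_user: bool       # runs on behalf of an individual employee


def place(workload: Workload, edge_latency_cutoff_ms: int = 50) -> str:
    """Return an assumed deployment target for a workload."""
    if workload.is_training:
        return "central"  # training stays centralised
    if workload.max_latency_ms <= edge_latency_cutoff_ms:
        return "edge"     # tight latency budgets run close to the source
    if workload.per_user:
        return "device"   # e.g. an employee copilot on an AI PC
    return "cloud"        # everything else, chosen explicitly rather than by default


inspection = Workload("quality_inspection", 20, False, False)
training = Workload("model_training", 60_000, True, False)
copilot = Workload("employee_copilot", 500, False, True)

for w in (inspection, training, copilot):
    print(w.name, "->", place(w))
# quality_inspection -> edge
# model_training -> central
# employee_copilot -> device
```

The value of writing the rule down is less in the specific thresholds than in making placement a reviewable decision, so that cost, latency, and reliability trade-offs are stated rather than implied.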

Energy and performance constraints add another layer of complexity, particularly as continuous AI inferencing places sustained pressure on distributed infrastructure. General-purpose systems often struggle to maintain efficiency at this level, which is why purpose-built approaches, such as Lenovo Neptune liquid cooling, are becoming increasingly relevant for large-scale deployments.

The operating model, however, tends to be the deeper constraint. Hybrid AI cuts across IT, operations, and business teams, but ownership is often fragmented, and even with the right architecture in place, adoption can stall if workflows are not redesigned to support how AI systems are actually used.

Yao highlights this in practical terms by pointing to digital workplace deployments, where technology rollout alone is not enough. The full value depends on how well organisations redesign processes, support adoption, and adapt user behaviour alongside the system itself. This reinforces the point that deployment alone is insufficient without corresponding changes in how teams work.

Hybrid AI delivers value when workload strategy, infrastructure, and operating models are managed together rather than in isolation, because weaknesses in any one of those areas can slow adoption even when the rest of the system is in place.

Designing for production from the start

Organisations that successfully scale AI tend to follow a consistent approach, designing pilots with production realities in mind from the outset by embedding governance early, aligning AI initiatives with business workflows, and investing in operational foundations before deployment.

The focus, therefore, shifts from proving that a model works to designing systems that operate reliably within existing constraints. That means addressing data quality, integration complexity, and organisational readiness alongside technical performance, so that deployment is shaped by the realities of existing workflows rather than by the assumptions of a controlled pilot.

Without this, organisations risk building a growing backlog of promising pilots that fail to deliver sustained business impact. The gap between experimentation and execution does not close on its own, and narrowing it requires treating model capability, infrastructure, and operating models as interconnected parts of a single system.

For enterprises looking to scale AI in a meaningful way, production readiness has to be built in from day one rather than retrofitted after a pilot succeeds.