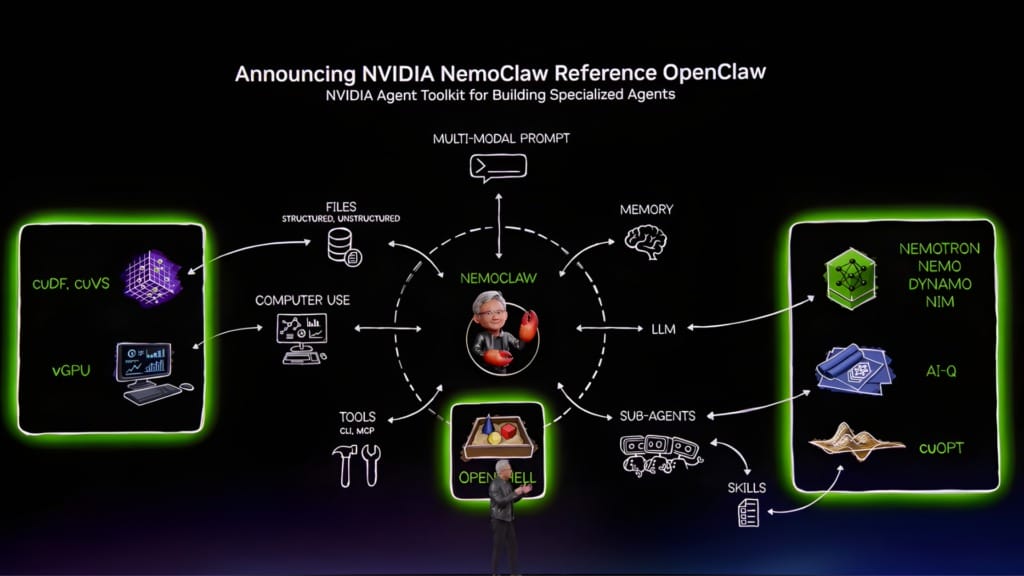

NVIDIA targets enterprise software with a “full-stack” agent architecture

NVIDIA connects models, runtimes and orchestration into a "full-stack" agent architecture for enterprise workflows.

NVIDIA is extending its role in AI beyond infrastructure into the software layer, outlining a “full-stack” agent architecture that connects models, runtimes, orchestration and enterprise applications. The company’s latest announcements describe a more integrated system, where AI operates across workflows rather than sitting behind individual prompts.

At the centre is the NVIDIA Agent Toolkit, which brings together open models, agent frameworks and runtime environments into a unified development stack. It provides a way for enterprises to build systems that can interpret tasks, access relevant data and carry out actions within defined constraints, without requiring constant user direction.

From models to autonomous execution

The Agent Toolkit combines components such as Nemotron models, the AI-Q agent blueprint and the OpenShell runtime. These are designed to work as a coordinated layer, enabling agents to interact with enterprise data, select appropriate tools and produce outputs aligned to ongoing tasks.

This changes how AI is applied within enterprise systems. Instead of responding to discrete queries, agents operate within longer processes, adjusting their behaviour based on context, available data and system constraints. In practice, this could involve retrieving internal data, selecting an analysis approach and generating outputs without requiring step-by-step prompts.

“Claude Code and OpenClaw have sparked the agent inflection point — extending AI beyond generation and reasoning into action,” said Jensen Huang, founder and CEO of NVIDIA. “Employees will be supercharged by teams of frontier, specialized and custom-built agents they deploy and manage. The enterprise software industry will evolve into specialized agentic platforms, and the IT industry is on the brink of its next great expansion.”

This direction introduces new expectations around traceability and reliability. NVIDIA has embedded evaluation mechanisms within AI-Q so enterprises can track how outputs are generated, which becomes more important as systems take on operational roles.

The development model also changes. Instead of centring on a single model, teams are assembling multiple components into workflows, where reasoning, data access and execution are handled across different layers.

Runtime control becomes non-negotiable

As agents take on more responsibility, the runtime layer becomes a primary control point. OpenShell defines how agents access data, interact with networks and connect to external services, enforcing policy-based guardrails around security and privacy.

This places governance directly within the execution environment. Rather than relying on controls applied after the fact, the system defines what agents can and cannot do as part of how they operate.
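The idea of governance living inside the execution environment can be illustrated with a minimal sketch. The example below is not OpenShell's API or policy format, which the announcement does not detail; the `Policy`, `GuardedRuntime` and `PolicyViolation` names, the host names and the paths are all hypothetical. It only shows the general pattern: every agent action passes through a deny-by-default check before it runs, and each decision is recorded for later review.

```python
import posixpath
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical allow-list policy; not OpenShell's actual schema."""
    allowed_hosts: frozenset = frozenset()
    readable_prefixes: tuple = ()


class PolicyViolation(Exception):
    """Raised when an agent attempts an action the policy does not allow."""


class GuardedRuntime:
    """Checks each action against the policy before it executes."""

    def __init__(self, policy):
        self.policy = policy
        self.audit_log = []  # (action, target, allowed) tuples

    def _check(self, action, target, allowed):
        # Record every decision, then block anything not explicitly allowed.
        self.audit_log.append((action, target, allowed))
        if not allowed:
            raise PolicyViolation(f"{action} on {target!r} denied by policy")

    def connect(self, host):
        self._check("network", host, host in self.policy.allowed_hosts)
        return f"connected to {host}"  # placeholder for a real connection

    def read(self, path):
        # Normalise first so "/data/../etc/passwd" cannot slip past the prefix test.
        clean = posixpath.normpath(path)
        allowed = any(clean.startswith(p) for p in self.policy.readable_prefixes)
        self._check("read", clean, allowed)
        return f"contents of {clean}"  # placeholder for a real file read


if __name__ == "__main__":
    runtime = GuardedRuntime(
        Policy(allowed_hosts=frozenset({"crm.internal"}),
               readable_prefixes=("/data/",))
    )
    print(runtime.connect("crm.internal"))
    try:
        runtime.connect("example.com")
    except PolicyViolation as err:
        print(err)
```

Because the check sits in the same code path as the action, a denied request never executes, which is the distinction the article draws between runtime guardrails and controls applied after the fact.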

NemoClaw extends this structure into the OpenClaw ecosystem by packaging models and runtime components into a single deployment layer. It enables agents to run across local and cloud environments, combining open models with externally hosted systems through controlled routing.

“OpenClaw opened the next frontier of AI to everyone and became the fastest-growing open source project in history,” said Jensen Huang. “Mac and Windows are the operating systems for the personal computer. OpenClaw is the operating system for personal AI. This is the moment the industry has been waiting for — the beginning of a new renaissance in software.”

These systems are designed for continuous operation. Agents run over extended periods, interacting with multiple systems and completing tasks that unfold over time, which introduces new requirements around persistent compute and system stability.

Agents move inside enterprise workflows

NVIDIA’s approach depends on integration with existing enterprise software. Partnerships across productivity, design and business platforms show how agents are being embedded directly into workflows rather than positioned as separate tools.

Within these environments, agents can monitor processes, trigger actions and coordinate across systems. Their role becomes operational, supporting tasks that previously required manual coordination between teams and software.

Adobe’s collaboration with NVIDIA illustrates how this plays out in practice. By combining agent frameworks with creative and marketing workflows, the integration enables longer-running processes that connect content creation, campaign execution and asset management.

“Content creation is exploding, and our partnership with NVIDIA is grounded in a shared vision to reinvent creative and marketing workflows with the power of AI,” said Shantanu Narayen, chair and CEO of Adobe. “As AI transforms how marketing teams and media and entertainment studios work, Adobe and NVIDIA will bring together our Firefly models, CUDA libraries into our applications, 3D digital twins for marketing, and Agent Toolkit and Nemotron to our agentic frameworks to deliver high-quality, controllable and enterprise-grade AI workflows of the future.”

A similar approach is emerging on enterprise platforms such as Salesforce, where agents are integrated into service and sales workflows and use Slack as a conversational interface. Across these environments, agents are shaped around specific functions, from engineering design to customer operations, creating a specialised layer of automation embedded within enterprise software.

Orchestration defines cost and performance

As agent systems expand, orchestration becomes a key factor in performance and cost. NVIDIA Dynamo 1.0 introduces a distributed inference layer that manages GPU and memory resources across workloads, coordinating how tasks are executed.

The system routes requests, allocates memory and balances workloads based on demand. This is particularly relevant for agent workflows, which involve multiple steps, variable workloads and sustained interaction with data.
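A toy example helps show what routing, memory allocation and load balancing mean at this layer. The sketch below is not Dynamo's scheduler or API, which the announcement does not describe; the `Worker` and `Router` classes and the least-loaded placement rule are assumptions chosen for illustration. Each request declares how much memory it needs, and the router places it on the worker with the fewest active requests that still has room.

```python
from dataclasses import dataclass


@dataclass
class Worker:
    """Hypothetical GPU worker with a fixed memory budget in GB."""
    name: str
    memory_gb: float
    used_gb: float = 0.0
    active: int = 0

    @property
    def free_gb(self):
        return self.memory_gb - self.used_gb


class Router:
    """Places each request on the least-loaded worker that can fit it."""

    def __init__(self, workers):
        self.workers = list(workers)

    def route(self, request_gb):
        # Only workers with enough free memory are candidates.
        candidates = [w for w in self.workers if w.free_gb >= request_gb]
        if not candidates:
            return None  # caller would queue or reject the request
        # Prefer fewer active requests; break ties by most free memory.
        chosen = min(candidates, key=lambda w: (w.active, -w.free_gb))
        chosen.used_gb += request_gb
        chosen.active += 1
        return chosen

    def release(self, worker, request_gb):
        # Return the memory when a request finishes.
        worker.used_gb -= request_gb
        worker.active -= 1


if __name__ == "__main__":
    router = Router([Worker("gpu-a", 80.0), Worker("gpu-b", 40.0)])
    for _ in range(4):
        placed = router.route(30.0)
        print(placed.name if placed else "no capacity")
```

A production inference layer would weigh many more signals than this, but the same trade-off applies: multi-step agent workloads hold resources for longer, so placement decisions have a direct effect on throughput and cost.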

“Inference is the engine of intelligence, powering every query, every agent and every application,” said Jensen Huang. “With NVIDIA Dynamo, we’ve created the first-ever ‘operating system’ for AI factories. The rapid adoption across our ecosystem shows this next wave of agentic AI is here, and NVIDIA is powering it at global scale.”

The platform is already being integrated by cloud providers such as Amazon Web Services, Microsoft Azure and Google Cloud, as well as AI-native companies including Cursor and Perplexity. These integrations highlight how orchestration is becoming part of the broader stack rather than a separate optimisation layer.

Performance at this level directly affects how viable these systems are in production. Improvements in throughput and cost efficiency determine whether agents can operate continuously within enterprise environments.

Enterprise software becomes system-led

The result is a change in how enterprise software is structured. Systems are evolving into environments where agents operate across layers, from accessing data to executing tasks, rather than being confined to individual applications.

This introduces new challenges around coordination and control. Enterprises must manage how multiple agents interact, how decisions are governed and how systems behave under continuous operation.

At the same time, integration into existing platforms suggests that adoption is moving closer to core business functions. Agents are becoming part of how work is carried out, embedded within workflows rather than layered on top.

Over time, this reshapes the role of software itself. Enterprise systems begin to resemble coordinated networks of processes, where tasks are carried out through interactions between agents, data and infrastructure, rather than through direct user input alone.