NVIDIA’s “5-layer cake” shows how AI is becoming a full-stack industry

NVIDIA’s “5-layer cake” shows how AI is shifting from chips to full-stack systems, where power, networking, and software determine real-world performance.

For years, the AI race was framed as a contest to build faster chips, pack more of them into servers, and push for longer training runs. That story still makes sense, but it leaves out what is now shaping the industry.

Jensen Huang’s “5-layer cake” is useful because it clarifies how AI is now a full-stack industry. While chips remain central, value comes from the entire system, including power, cooling, data centre design, networking, software, and applications. Together, these elements determine whether AI infrastructure adds value or becomes an expensive bottleneck.

The recent NVIDIA GTC, held in March 2026, helped put that idea into context. The event itself was packed with announcements, but the more interesting point sat underneath them. NVIDIA was showing how each part of its portfolio fits into one machine.

The stack is the product

The “5-layer cake” is a simple way to explain AI architecture. Huang did not mean that every layer matters equally, but he was showing that AI infrastructure only really works when the whole system is built to work together.

NVIDIA is transitioning from a component vendor to a systems provider. Its goal is to control the entire production environment, linking power and cooling requirements directly to compute and networking performance.

This also explains why the conversation around AI has moved towards inference. Training remains important, especially for frontier models, but the commercial pressure now sits elsewhere as well. AI has to keep producing output, whether that means tokens, responses, simulations, or autonomous actions. The industry is moving from training models to running AI at scale.

Power and infrastructure come first

The concept also makes a practical point: AI starts with physical infrastructure. That sounds obvious, yet much of the market still speaks about AI as if it begins with model architecture or chip performance.

At GTC 2026, NVIDIA used DSX, its data centre simulation platform, to push a different view. Operators can build digital twins of facilities to model thermal behaviour, airflow, and power requirements before the hardware is even deployed. That is a practical response to a very real problem. AI data centres are becoming harder to build, harder to cool, and far more demanding on power systems.

This is where the “cake” moves from a metaphor to something more practical. Land, power, and facility design are not background issues. They decide what can be built, how fast it can be deployed, and what the running costs will look like. A data centre built for older workloads will struggle if it is suddenly asked to support dense AI racks at much higher power levels.
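
To make the power constraint concrete, here is a minimal sketch of the arithmetic. Every figure in it is hypothetical and chosen only for illustration (a 10 MW facility, a PUE of 1.25, 10 kW legacy racks versus 100 kW AI racks); none of them come from NVIDIA or from GTC. The helper name `racks_supported` is also an assumption, not part of any real tool.

```python
import math


def racks_supported(facility_power_kw: float, rack_power_kw: float, pue: float = 1.0) -> int:
    """Estimate how many racks a facility can power.

    PUE (power usage effectiveness) is total facility power divided by
    IT equipment power, so the power left for racks is facility / PUE.
    """
    if rack_power_kw <= 0:
        raise ValueError("rack_power_kw must be positive")
    if pue < 1.0:
        raise ValueError("pue cannot be below 1.0")
    it_power_kw = facility_power_kw / pue
    return math.floor(it_power_kw / rack_power_kw)


# Hypothetical facility: 10 MW total, PUE of 1.25 (assumed values).
FACILITY_KW = 10_000
PUE = 1.25

legacy = racks_supported(FACILITY_KW, rack_power_kw=10, pue=PUE)   # assumed legacy rack
dense = racks_supported(FACILITY_KW, rack_power_kw=100, pue=PUE)   # assumed dense AI rack

print(f"legacy racks: {legacy}")  # legacy racks: 800
print(f"dense AI racks: {dense}")  # dense AI racks: 80
```

Under these assumed numbers, the same building that powered 800 legacy racks can power only 80 dense AI racks, and that is before cooling capacity or floor loading enters the picture.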

The same logic applies across the industry. Bottlenecks can appear at any layer, from chips to utilities to cooling systems, and even in facility design. Solving AI infrastructure challenges therefore requires attention to every layer, not just chips.

Faster chips still need the rest of the system

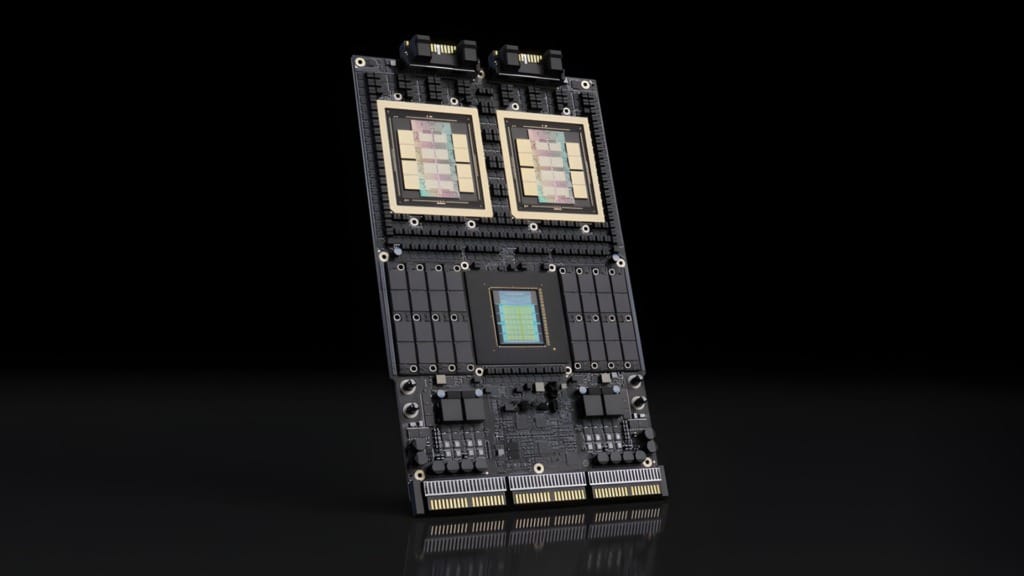

That said, the chip layer remains the focus, and GTC 2026 gave NVIDIA a clear headline here with the Vera Rubin platform. Positioned as the successor to Blackwell, it pairs the Vera CPU with the Rubin GPU for more demanding reasoning and agentic workloads.

That part of the story is much easier to understand. Faster computing still matters, especially for enterprises facing rising inference loads and the need for higher throughput, lower latency, and greater efficiency. NVIDIA also used the event to highlight the scale of performance gains it believes the market can expect as the stack improves.

Still, the point is not about one processor beating another in isolation. A faster chip does not solve data movement, orchestration, security, or deployment complexity. AI infrastructure succeeds when the layers around it keep up. When they do not, the bottleneck sits elsewhere in the stack, and the hardware idles while the rest of the system catches up.
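
The bottleneck argument can be sketched in a few lines. This is a deliberately simplified model, assuming each layer can be summarised by a single sustained capacity and that work passes through the layers in series. The layer names follow the article, but the capacity figures and the function names `effective_throughput` and `utilisation` are hypothetical.

```python
from typing import Dict, Tuple


def effective_throughput(layer_capacity: Dict[str, float]) -> Tuple[str, float]:
    """Return (bottleneck layer, sustained throughput) for layers in series.

    In a serial pipeline, sustained output is capped by the slowest stage.
    """
    if not layer_capacity:
        raise ValueError("at least one layer is required")
    bottleneck = min(layer_capacity, key=layer_capacity.get)
    return bottleneck, layer_capacity[bottleneck]


def utilisation(layer_capacity: Dict[str, float]) -> Dict[str, float]:
    """Fraction of each layer's capacity that is actually used."""
    _, limit = effective_throughput(layer_capacity)
    return {name: limit / cap for name, cap in layer_capacity.items()}


# Hypothetical capacities in tokens per second (illustrative only).
stack = {
    "power_and_cooling": 1200.0,
    "chips": 2000.0,
    "networking": 800.0,
    "software": 1500.0,
}

layer, rate = effective_throughput(stack)
print(layer, rate)
for name, used in utilisation(stack).items():
    print(f"{name}: {used:.0%} utilised")
```

With these assumed figures, networking caps the system at 800 tokens per second and the chips run at 40% of what they could deliver. Doubling chip capacity in this model changes nothing until the networking layer is upgraded.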

This is why focusing only on chips misses the real point. NVIDIA wants customers to think about system-wide production capacity: how much useful output the full stack delivers, how reliably, and at what cost.

Networking has become a bigger part of the story

Networking used to sit on the sidelines in mainstream AI coverage. It now deserves much more attention, and NVIDIA knows it.

At the event, the company described networking as the backplane of the modern AI system. That was more than stage framing. It reflects a practical truth about large-scale AI workloads: training and inference both fall apart when data cannot move quickly enough between processors, memory, and systems.

The Kyber rack architecture was one of the best examples. NVIDIA presented it as a way to connect up to 576 GPUs into a single optical scale-up domain. The technical details will appeal to infrastructure specialists, but the broader message is simpler. AI performance depends on coordination across the machine, not just raw speed at the chip level.

This is also why NVIDIA has spent so much time building out NVLink, Spectrum-X, and related networking technologies, tightening its grip on the parts of the system that customers cannot afford to ignore once deployments get large enough.

Software is where the grip gets tighter

The hardware matters, but the software layer is just as critical.

CUDA remains one of NVIDIA’s key advantages. It is deeply familiar to developers, widely supported, and built on years of ecosystem work across libraries, frameworks, and tools. Much of today’s AI software already runs well on CUDA, reducing the rework needed to get models into production. That gives NVIDIA a practical head start because rivals are not just competing on hardware performance, but also on how easily developers can build, optimise, and deploy on their platforms.

GTC 2026 pushed that argument further. OpenClaw was introduced as an open-source operating system for AI agents, while NemoClaw was presented as the enterprise-ready version for companies that want tighter data controls and private deployment. In other words, NVIDIA is working to make the agentic layer part of its wider stack rather than leaving it open for others to define.

That is smart for two reasons. First, it makes the company’s software story feel more complete. Second, it raises switching costs. Customers who build on NVIDIA’s tools, deployment frameworks, and runtime environments are not merely choosing hardware. They are building workflows, internal processes, and products around one vendor’s logic.

This does not mean rivals cannot compete. However, it does mean that the fight is much harder than benchmarking one GPU against another.

This is where everything comes together in reality

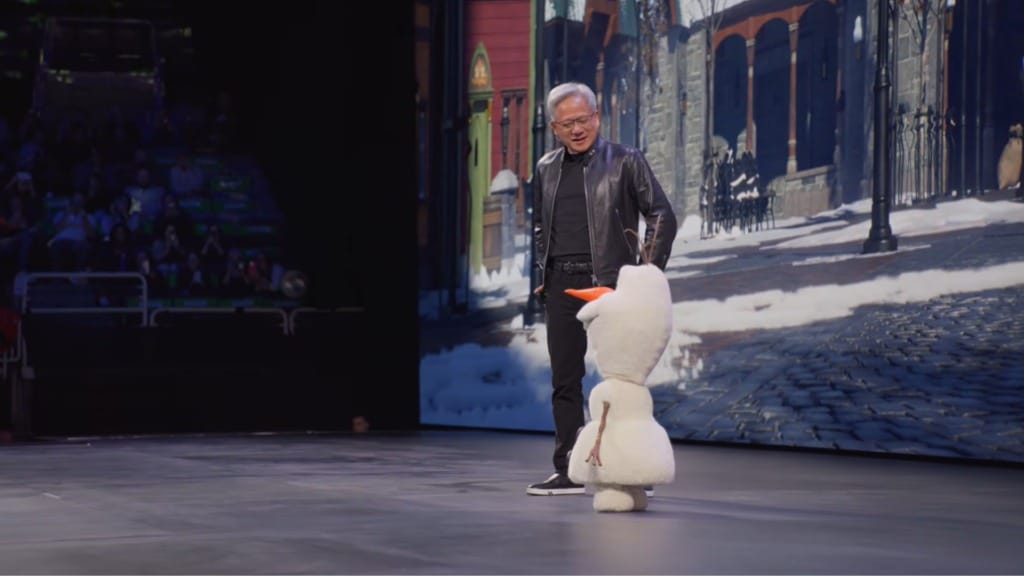

The application layer delivered some of NVIDIA’s most memorable demos at GTC 2026. Disney’s Olaf robot, trained in Omniverse using the Newton physics engine, was the sort of example built for a big stage. It turned a complex stack into something people could see.

Foxconn served a different purpose. Its use of digital twins to simulate production environments before changes are made on the factory floor showed how NVIDIA wants the “cake” to land in the real world. This extends beyond research systems and model demos to include the use of simulation, automation, and AI tooling within large industrial operations.

This layer matters because it shows whether the other layers were worth building in the first place. Power, chips, networking, and software can all look impressive on slides. Enterprises eventually want to know what these systems help them do, whether that comes down to planning factories, running agents, or speeding up design and operations work.

What NVIDIA is really selling

At its core, the “5-layer cake” represents NVIDIA’s effort to define AI infrastructure end-to-end. This integrated approach offers customers efficiency, speed, and simplicity by lowering friction and streamlining procurement with a coherent stack.

At the same time, there is another side to that. The deeper NVIDIA reaches into networking, software, simulation, and agent platforms, the more dependent customers may become on its roadmap and ecosystem. Once the stack is bought as a whole, walking away becomes much harder.

Huang’s metaphor also serves as a roadmap for the company’s future. NVIDIA wants AI to be understood as a five-layer production system because that is exactly the kind of system, and the value chain, it is trying to own.