ChatGPT introduces a trusted contact feature for users at risk of self-harm

OpenAI adds Trusted Contact to ChatGPT, which can alert a nominated adult if a user is judged to be at serious risk of self-harm.

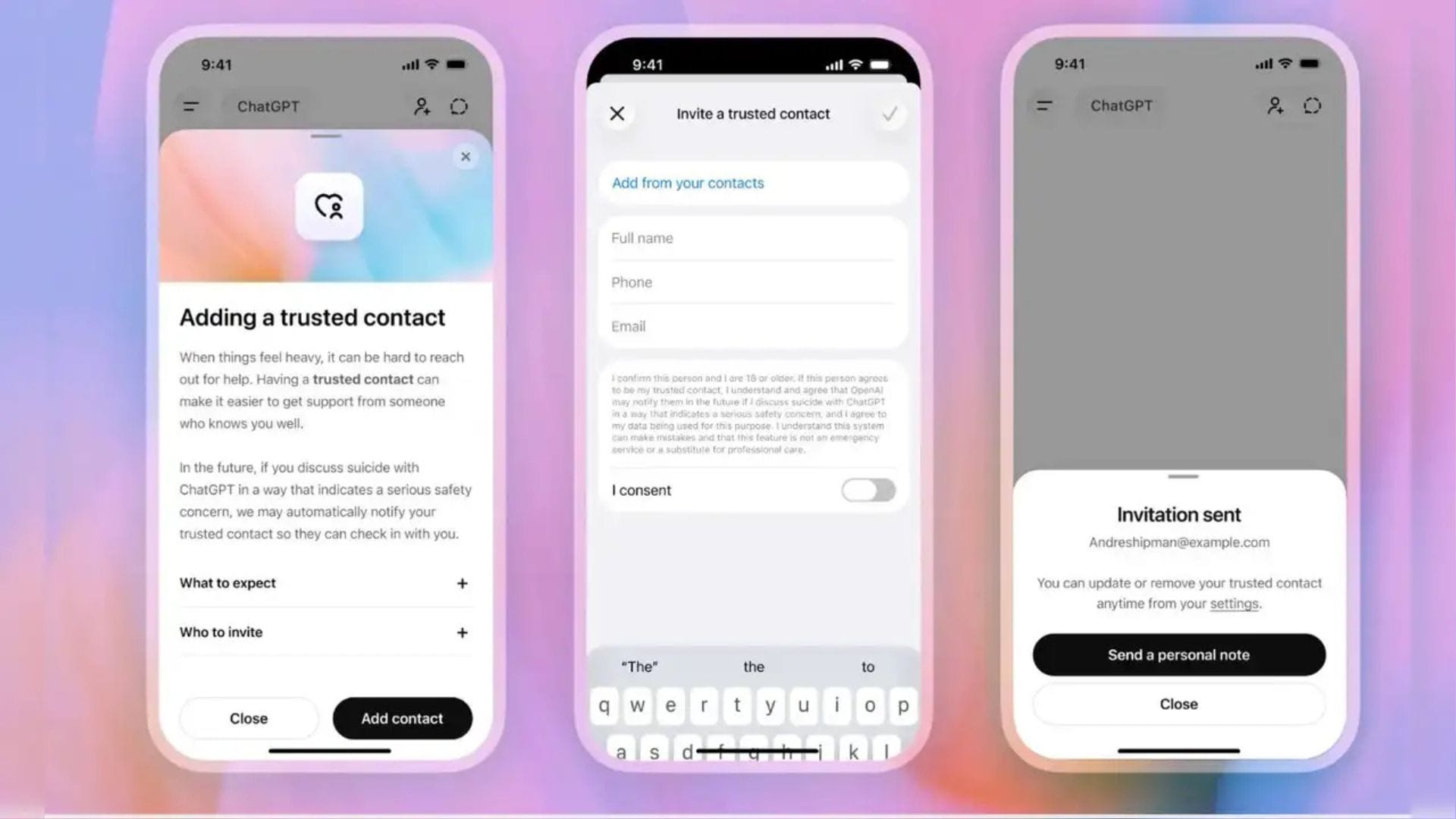

OpenAI has launched a new safety feature for ChatGPT that allows users to nominate a trusted person who may be contacted if the system detects a serious risk of self-harm. The feature, called Trusted Contact, is part of the company’s broader effort to improve safeguards for vulnerable users as more people turn to artificial intelligence tools for emotional support and mental health conversations.

The company said the option is available to users aged 18 or older and builds on ChatGPT’s existing parental control settings. OpenAI stated that the feature is designed to provide an additional layer of support in situations where users may be experiencing severe emotional distress or discussing suicide during conversations with the chatbot.

OpenAI previously told the BBC that more than one million of ChatGPT’s 800 million weekly users express suicidal thoughts in conversations with the platform. The growing use of AI chatbots as a form of digital therapy has raised concerns among mental health experts, regulators, and campaign groups about technology companies’ responsibilities to protect vulnerable users.

OpenAI responds to concerns over chatbot safety

The introduction of Trusted Contact follows mounting scrutiny over how AI systems respond to people experiencing mental health crises. In recent years, OpenAI has faced criticism over cases in which ChatGPT allegedly failed to provide safe responses to vulnerable users.

Last year, the company was named in a wrongful death lawsuit linked to the suicide of a teenager. The legal complaint alleged that the teenager had discussed four previous suicide attempts with ChatGPT before receiving assistance in planning his death. OpenAI has not publicly commented in detail on the claims but has said it continues to improve safety measures within the chatbot.

Concerns intensified after a BBC investigation published in November 2025 reported that ChatGPT had, in at least one case, advised a user on how to end her life. Following the report, OpenAI said it had strengthened the way the chatbot handles conversations involving self-harm, suicide and emotional distress.

The company said the Trusted Contact feature is intended to encourage users to seek help from people they know personally before a crisis escalates. OpenAI described the system as a support mechanism rather than an emergency response service, adding that it is not designed to replace professional medical care or crisis intervention.

How the trusted contact system works

Users who choose to enable the feature can nominate one adult contact through the ChatGPT settings menu. The nominated person will receive an invitation that must be accepted within one week. If the invitation expires or is declined, users can choose another contact instead.

According to OpenAI, ChatGPT will first warn users if it detects what it describes as a serious risk of self-harm. The chatbot will encourage users to contact their trusted person directly and may suggest ways to begin the conversation.

The company stressed that the process is not fully automated. OpenAI said a “small team of specially trained people” will review flagged conversations before any notification is sent. Only cases judged to involve a significant risk of self-harm will trigger an alert to the nominated contact.

If approved, the trusted person may receive an email, text message or in-app notification informing them that the user could be going through a difficult period. The message will encourage the contact to check in with the individual and offer support where possible.

OpenAI said the trusted contact will not receive copies or transcripts of conversations held with ChatGPT. The company stated that the restriction is intended to protect user privacy while still enabling intervention in high-risk situations.
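
For readers who want to see the described flow in concrete terms, the steps reported above can be modelled in a few lines of Python. This is an illustrative sketch only, not OpenAI's implementation: the names `Invitation`, `InviteStatus`, `INVITE_TTL` and `build_notification` are hypothetical. Only the one-week acceptance window, the human-review gate, the absence of transcripts and the notification wording come from the company's description.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional

# Reported behaviour: the invitation must be accepted within one week.
INVITE_TTL = timedelta(weeks=1)


class InviteStatus(Enum):
    PENDING = "pending"
    ACCEPTED = "accepted"
    EXPIRED = "expired"


@dataclass
class Invitation:
    """Hypothetical record of an invitation sent to a nominated adult."""
    contact: str
    sent_at: datetime
    status: InviteStatus = InviteStatus.PENDING

    def accept(self, now: datetime) -> InviteStatus:
        # Only a pending invitation can change state.
        if self.status is InviteStatus.PENDING:
            expired = now - self.sent_at > INVITE_TTL
            self.status = InviteStatus.EXPIRED if expired else InviteStatus.ACCEPTED
        return self.status


def build_notification(
    user_name: str, invitation: Invitation, reviewer_approved: bool
) -> Optional[str]:
    """Return the alert text, or None if no alert should be sent.

    Assumption: an alert needs both an accepted invitation and approval
    from a human reviewer. No conversation content is ever included.
    """
    if invitation.status is not InviteStatus.ACCEPTED or not reviewer_approved:
        return None
    return (
        f"{user_name} may be going through a difficult time. "
        "As their Trusted Contact, we encourage you to check in with them."
    )
```

In this sketch a notification is produced only when the contact has accepted in time and a reviewer has signed off, which mirrors the company's statement that the process is not fully automated.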

Privacy and human oversight remain central

OpenAI said every notification sent through the Trusted Contact system will be reviewed by trained staff before delivery. The company acknowledged that AI systems cannot always accurately determine a person’s emotional state and warned that alerts may not always reflect exactly what users are experiencing.

“[The user] may be going through a difficult time,” the notification will state. “As their Trusted Contact, we encourage you to check in with them.”

The company added: “While no system is perfect, and a notification to a Trusted Contact may not always reflect exactly what someone is experiencing, every notification undergoes trained human review before it is sent, and we strive to review these safety notifications in under one hour.”

The rollout comes as technology companies face increasing pressure to demonstrate stronger safeguards around AI products, particularly as conversational chatbots become more widely used for companionship, advice and emotional support. Mental health organisations have repeatedly warned that AI systems can provide misleading, harmful or inconsistent responses if not carefully monitored.

Industry experts say OpenAI’s latest move reflects growing recognition within the technology sector that AI companies may need to take a more active role in user safety as chatbots become integrated into everyday life. However, questions remain over how effective such systems can be in identifying genuine emergencies without invading privacy or producing false alarms.