NVIDIA’s Physical AI strategy bets on vertical integration the industry may not accept

At GTC 2026, NVIDIA outlined plans to become the platform owner for Physical AI. The bet assumes the industry will accept integration on a scale it has historically resisted.

NVIDIA is attempting a fundamental strategic realignment. At GTC 2026, the company outlined plans to move beyond selling chips and become the platform owner for Physical AI: systems that operate in and reason about the three-dimensional world. The ambition spans robotics, autonomous vehicles, and telecommunications infrastructure. The binding element is vertical integration on a scale that the technology industry has rarely accepted. NVIDIA wants to control the foundation models, the inference operating system, the silicon architecture, and the reference designs for deployment. The bet assumes Physical AI will emerge as a unified platform category, like smartphones or cloud computing. If it fragments into sector-specific solutions instead, or if NVIDIA’s largest customers refuse to depend on it, the strategy weakens considerably.

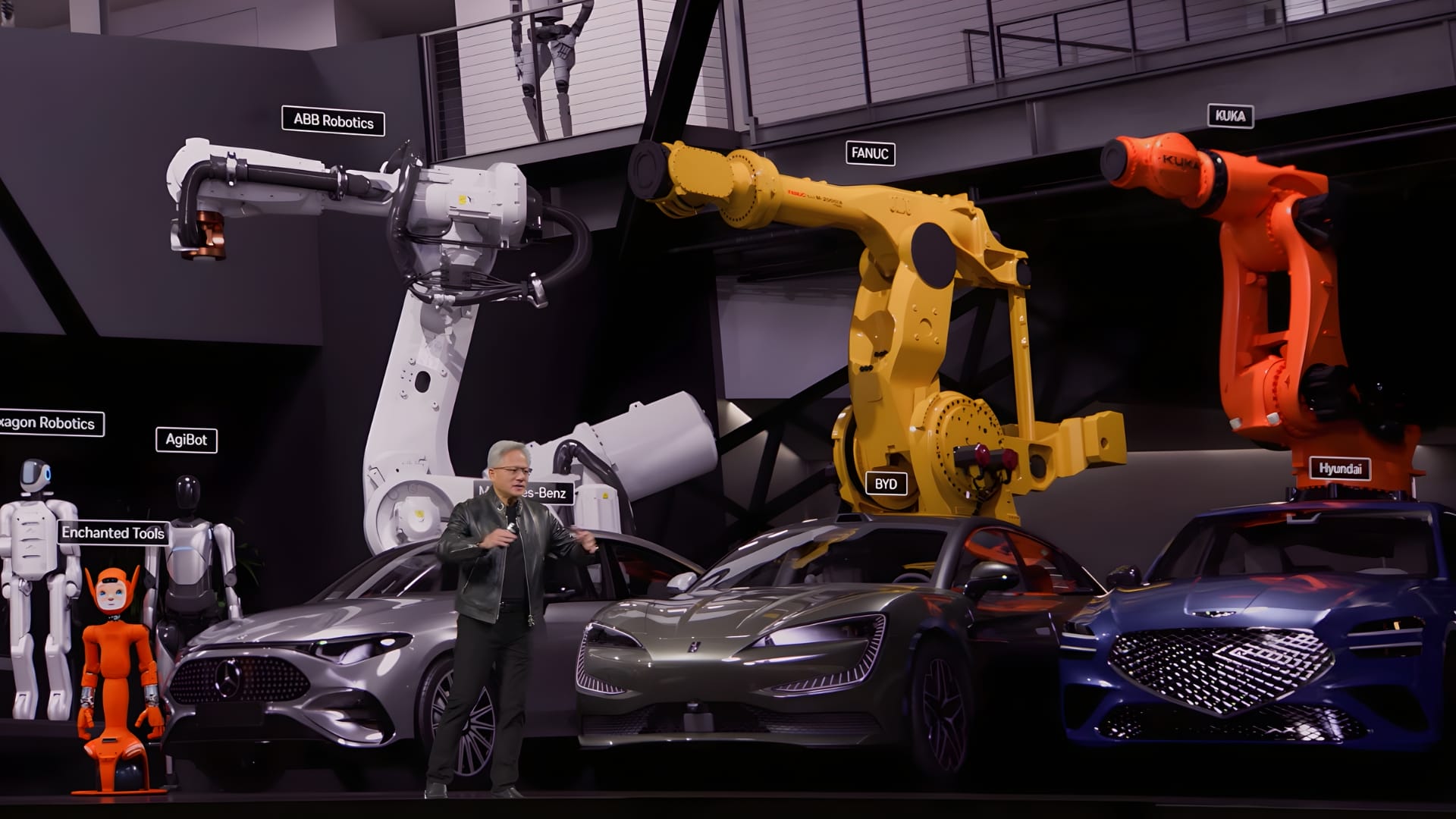

The announcements included Cosmos, a suite of foundation models for spatial reasoning, and Dynamo, a framework for distributed inference. A new computing platform called Vera Rubin replaces Blackwell and integrates hardware from Groq, the inference specialist NVIDIA acquired in late 2025. AI-RAN embeds inference workloads into cellular networks, while the DRIVE Hyperion platform introduces Alpamayo 1.5, a Vision-Language-Action model for autonomous vehicles that generates explainable driving decisions. Partnerships with Hyundai, Kia, and BYD provide scale in Asian manufacturing.

While technically coherent, NVIDIA’s vision collides with an enterprise mandate for vendor diversification. The more ‘seamless’ the ecosystem becomes, the higher the barrier to entry for enterprises wary of a single-vendor future.

The software layer as a strategic lock-in

Cosmos, the foundation of the stack, is a collection of models designed to simulate and reason about physical environments, tracking spatial relationships, object permanence, and physical dynamics. Unlike language models that predict the next token, these systems process sensor streams, simulate consequences, and make decisions within safety and latency constraints. NVIDIA argues that embodied AI cannot be built solely on transformer architectures. Cosmos is meant to provide the spatial reasoning layer that robotics, autonomous systems, and industrial applications require. Enterprises that adopt it face significant switching costs. Those that build their own world models treat Cosmos as a reference point, not infrastructure.

While Cosmos provides the world model, Dynamo sits above it as the operating system for inference. It manages workloads across distributed GPU clusters, routing requests dynamically to maintain low latency. This is where NVIDIA establishes operational dependency. Enterprises that deploy Dynamo hand over infrastructure orchestration to NVIDIA’s software stack. The performance advantages may justify that trade-off, but it also means losing architectural flexibility. Cloud providers have historically resisted this kind of vertical integration, preferring open-source tools like vLLM that allow customisation. Whether AWS, Google Cloud, or Microsoft Azure adopt Dynamo at scale will determine whether NVIDIA achieves platform control or remains a hardware vendor with adjacent software.

This drive for platform control extends beyond software into the model layer itself through the Nemotron Coalition, a partnership with AI labs including Mistral AI and Perplexity that functions as a strategic hedge. NVIDIA positioned the coalition as support for open-source frontier models, ensuring its hardware remains relevant regardless of which architecture enterprises choose. Its primary objective is to safeguard against the enclosure of the model layer by proprietary giants like OpenAI or Anthropic. If closed models dominate, NVIDIA risks becoming less relevant at the model layer. The coalition is less an act of altruism than one of survival: it ensures that even if the model layer fragments, the infrastructure remains tied to NVIDIA and the company remains the industry’s gravitational centre.

Infrastructure constraints and the integration trade-off

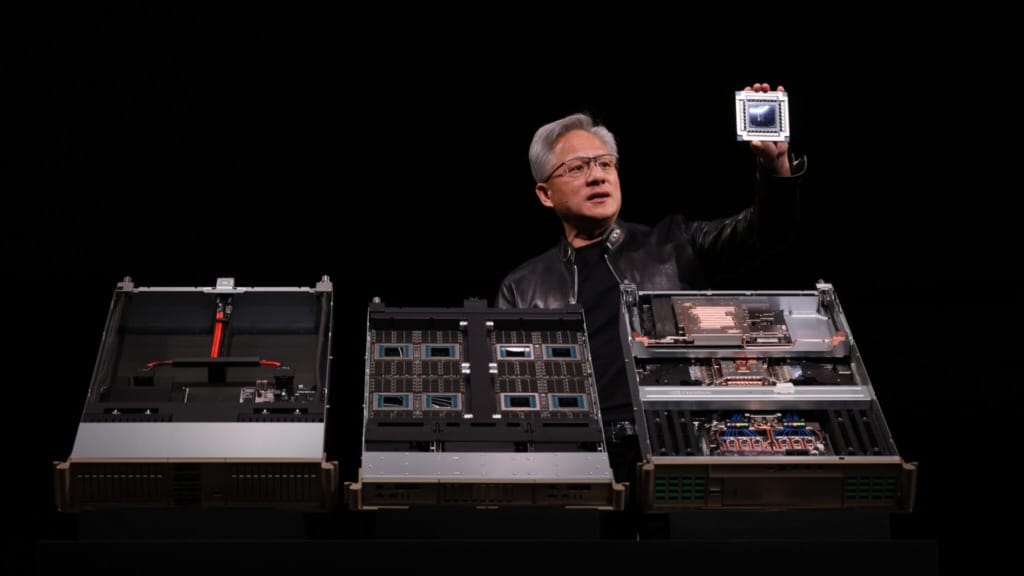

Vera Rubin represents a departure from NVIDIA’s traditional merchant silicon model. The platform integrates the Vera CPU with Groq 3 LPX racks, splitting inference workloads across chips optimised for the prefill and decode stages. This heterogeneous approach prioritises memory bandwidth over raw compute, which matters for agentic AI workloads. NVIDIA claims a fiftyfold improvement in tokens per watt. If accurate, that shifts the economics of inference substantially. Until Vera Rubin systems reach production deployments, the performance claims remain projections. More importantly, the architecture forces dependency. Enterprises cannot easily swap components or mix vendors. They accept the integrated stack or walk away.
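To put the tokens-per-watt claim in concrete terms, the sketch below works through the arithmetic of what a fiftyfold efficiency gain would mean for the electricity cost of inference. Only the fiftyfold multiplier comes from NVIDIA's claim; the baseline efficiency and the electricity price are hypothetical placeholders chosen for illustration, and the function name is our own. It treats "tokens per watt" as tokens per second per watt, which is equivalent to tokens per joule.

```python
JOULES_PER_KWH = 3.6e6  # 1 kWh = 3.6 million joules


def energy_cost_per_million_tokens(tokens_per_joule: float, price_per_kwh: float) -> float:
    """Electricity cost of generating one million tokens.

    tokens_per_joule: efficiency in tokens per second per watt (== tokens/J).
    price_per_kwh: electricity price in any currency per kWh.
    """
    if tokens_per_joule <= 0:
        raise ValueError("efficiency must be positive")
    joules_needed = 1_000_000 / tokens_per_joule
    return joules_needed / JOULES_PER_KWH * price_per_kwh


# Hypothetical inputs, NOT published figures.
BASELINE_TOKENS_PER_JOULE = 0.5   # assumed efficiency of an older system
PRICE_PER_KWH = 0.10              # assumed electricity price
CLAIMED_MULTIPLIER = 50           # the fiftyfold improvement NVIDIA claims

baseline_cost = energy_cost_per_million_tokens(BASELINE_TOKENS_PER_JOULE, PRICE_PER_KWH)
claimed_cost = energy_cost_per_million_tokens(
    BASELINE_TOKENS_PER_JOULE * CLAIMED_MULTIPLIER, PRICE_PER_KWH
)

print(f"baseline: {baseline_cost:.4f} per million tokens")
print(f"claimed:  {claimed_cost:.4f} per million tokens")
print(f"ratio:    {baseline_cost / claimed_cost:.0f}x")
```

Whatever baseline is plugged in, the energy cost per token falls by the same factor as the efficiency gain. The calculation covers electricity only and says nothing about hardware, cooling, or licensing costs, which is one reason the claim stays a projection until production systems are measured.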

This push for dependency extends to the network edge through AI-RAN, which places inference workloads directly into 5G and 6G radio access networks, allowing mobile devices to offload tasks to nearby cell towers rather than routing them to distant data centres or processing them locally. NVIDIA is partnering with T-Mobile for deployment, and the advantages are tangible: lower latency, reduced power consumption on handsets, and better utilisation of cellular infrastructure. However, this ambition faces significant structural friction. Telecom deployment cycles are notoriously glacial, requiring coordination across a fragmented landscape of vendors and regulators. Furthermore, existing cell sites, designed for connectivity rather than high-density compute, frequently lack the thermal and power envelopes required for sustained inference. Qualcomm and Ericsson pose formidable competition, so NVIDIA is not the default choice. Its leverage depends entirely on Dynamo becoming the standard orchestration layer; without it, AI-RAN is simply a hardware alternative rather than a dominant platform.

This push for platform control culminates in the data centre, which NVIDIA has strategically rebranded as the ‘AI Factory.’ The framing treats inference as an industrial manufacturing process in which models serve as inputs, tokens as raw materials, and outputs as products, and it presents vertical integration as a structural necessity rather than a vendor preference. Factories require standardised processes, tight tolerances, and controlled environments, so reduced flexibility becomes the necessary price for performance. That makes the ‘AI Factory’ NVIDIA’s closing argument for vertical integration. If it succeeds, the data centre is no longer a collection of parts but a single, indivisible product.

The automotive partnerships and the competitor problem

The DRIVE Hyperion platform update centres on Alpamayo 1.5, a Vision-Language-Action model that generates driving decisions and auditable explanations using chain-of-thought reasoning. This addresses a genuine barrier to Level 4 autonomy. Regulators and insurers require transparent decision traces, particularly in edge cases and accidents. Most neural networks produce outputs without clear reasoning paths. If Alpamayo delivers credible explanations rather than post-hoc rationalisations, it could accelerate regulatory approvals for autonomous fleets, especially in dense urban markets across Asia, where adoption has been slower than anticipated.

Strategic partnerships with Hyundai, Kia, and BYD provide the necessary scale, yet they also introduce a fundamental contradiction. These are high-volume manufacturers with substantial operations across Asia. BYD is particularly significant because it has been investing heavily in developing its own AI stack. Tesla is further ahead and has built a proprietary full-stack system. NVIDIA needs these partnerships to establish market presence, but the partners are also competitors, building the capability to replace NVIDIA entirely. Every vehicle that ships with DRIVE Hyperion trains these manufacturers on what a full autonomy stack requires. The more successful NVIDIA is in the near term, the greater its risk of obsolescence in the long term as automakers bring development in-house.

This dynamic is not unique to the automotive industry. It mirrors the tension with cloud providers, who have used NVIDIA GPUs to build hyperscale AI infrastructure while simultaneously designing custom silicon to reduce dependency. The difference is that automotive manufacturers lack the chip design expertise that cloud providers possess. NVIDIA’s window may be longer, but the direction is the same. The partnerships look strong now. Whether they hold depends on how quickly automakers can replicate the stack and whether regulatory requirements create dependency that outlasts the technical advantage.

Acceptance risk, not execution risk

The GTC 2026 strategy assumes Physical AI will consolidate into a platform category where vertical integration delivers decisive advantages. NVIDIA is building towards that outcome with Cosmos as the model layer, Dynamo as the operating system, Vera Rubin as the silicon, and sector-specific products like AI-RAN and DRIVE Hyperion as the deployment vehicles. The architecture is coherent; the open question is whether the market structure supports it.

Physical AI may fragment instead. Automotive, robotics, and telecommunications have distinct regulatory environments, safety requirements, latency tolerances, and economics. A unified platform may deliver less value than tailored solutions. If that happens, NVIDIA’s vertical integration becomes a liability rather than an advantage. Customers will prefer modular components that can be adapted to sector-specific needs.

The larger risk is competitive. Tesla and BYD are developing in-house full-autonomy stacks. Cloud providers are designing custom silicon and building orchestration layers that reduce reliance on NVIDIA software. Telecom operators are evaluating alternatives from Qualcomm and Ericsson. NVIDIA’s historical strength has been neutrality: it sold to everyone without competing directly. That model is ending. The shift to platform ownership puts NVIDIA in direct competition with its largest customers. Whether they accept that arrangement depends on how large the performance gap remains and whether they believe they can close it themselves. Execution matters, but acceptance matters more.